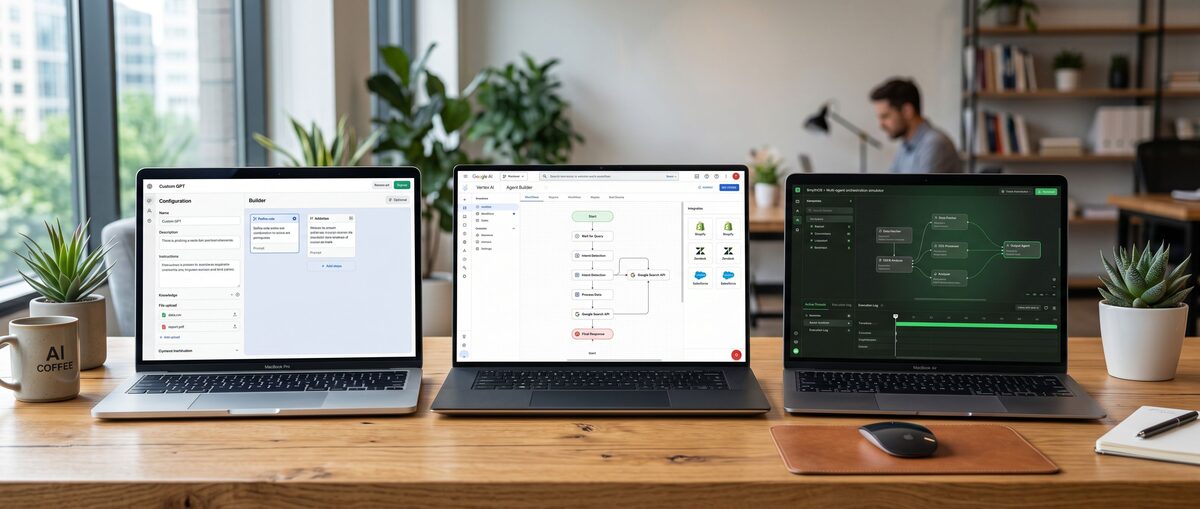

The AI development landscape has shifted in 2026, with multi-agent systems becoming a common pattern for sophisticated AI applications. Connecting these systems to real-world data sources, however, has remained frustratingly complex. The Model Context Protocol (MCP) addresses this with a universal standard for LLM integrations that reduces vendor lock-in and, by some estimates, cuts integration overhead by up to 70%.

Developed by Anthropic as an open-source solution, MCP acts as a "universal remote" for AI systems, enabling seamless connections between language models and external tools without the traditional headaches of custom API integrations. Whether you're building code review systems, data analysis pipelines, or sophisticated AI agent frameworks, understanding MCP is essential for any serious AI developer in 2026.

What is MCP Protocol and Why It Matters for Multi-Agent AI in 2026

The Model Context Protocol (MCP) is an open protocol that standardizes how large language models connect to external data sources and tools. It uses a client-server architecture and exchanges JSON-RPC 2.0 messages. Unlike traditional API integrations that require custom code for each tool, MCP provides one interface that works across different AI systems, which removes most of the repetitive integration work.

Understanding the Model Context Protocol Architecture

MCP's architecture has four parts that separate concerns: hosts, clients, servers, and transports. Hosts are the AI applications users interact with directly, such as Claude Desktop, Cursor, or VS Code with GitHub Copilot. Each host embeds MCP clients that handle protocol communication and capability discovery, with one client per server connection.

The MCP servers expose specific integrations—think GitHub repositories, PostgreSQL databases, or web scraping tools—while the transport layer manages communication between clients and servers. This separation means you can build one server that works with any MCP-compatible host, dramatically reducing development time.

What makes this architecture powerful is its standardized handshake. When a client connects to a server, it sends an `initialize` request, the two sides agree on a protocol version and exchange capability declarations, and the client confirms with an `initialized` notification. No integration-specific wiring is needed for this step.
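As a rough illustration of that handshake, the sketch below builds the JSON-RPC 2.0 messages involved. The method names `initialize` and `notifications/initialized` follow the MCP specification; the client and server names, the list of supported versions, and the `negotiateVersion` helper are assumptions made up for this example.

```typescript
// Minimal sketch of the MCP initialization handshake as JSON-RPC 2.0 messages.
type Json = Record<string, unknown>;

// Protocol revisions this hypothetical server understands, newest first.
const SERVER_VERSIONS = ["2025-03-26", "2024-11-05"];

function buildInitializeRequest(id: number, protocolVersion: string): Json {
  return {
    jsonrpc: "2.0",
    id,
    method: "initialize",
    params: {
      protocolVersion,
      capabilities: { sampling: {} },
      clientInfo: { name: "example-client", version: "0.1.0" },
    },
  };
}

// Echo the client's version if supported, otherwise offer the server's newest.
function negotiateVersion(requested: string): string {
  return SERVER_VERSIONS.indexOf(requested) !== -1 ? requested : SERVER_VERSIONS[0];
}

function buildInitializeResult(id: number, requested: string): Json {
  return {
    jsonrpc: "2.0",
    id,
    result: {
      protocolVersion: negotiateVersion(requested),
      capabilities: { tools: {} },
      serverInfo: { name: "example-server", version: "1.0.0" },
    },
  };
}

// Sent by the client once it accepts the server's response.
const initializedNotification: Json = {
  jsonrpc: "2.0",
  method: "notifications/initialized",
};
```

If the server answers with a version the client cannot speak, the client is expected to disconnect rather than continue.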

MCP vs Traditional API Integration Approaches

Traditional API integrations require developers to write custom code for each tool, manage API keys, handle different authentication methods, and maintain separate codebases for each LLM provider. This approach creates vendor lock-in and scales poorly as your system grows.

MCP reduces these problems by providing a standardized interface. Instead of writing custom integrations for GitHub, Slack, and your database for every AI application, you build each MCP server once and connect it to any compatible host. The protocol standardizes capability discovery and message formats; credentials for the underlying service still live in the server, typically in environment variables.

Performance can benefit as well. Traditional approaches often require multiple API calls and complex data transformation pipelines. A local MCP server adds little overhead beyond the underlying operation, and figures of under 200ms per operation are sometimes quoted, but latency depends on the transport and the backing service, so measure it in your own setup.

Key Benefits for Multi-Agent AI Systems

Multi-agent systems particularly benefit from MCP's standardization. When multiple AI agents need access to the same external tools, custom integrations grow multiplicatively (one adapter per agent-tool pair), while MCP needs only one client per agent and one server per tool.
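To make that growth concrete, here is a small arithmetic sketch. The function names and the example counts (5 agents, 8 tools) are illustrative assumptions, not figures from any real deployment.

```typescript
// Count the integrations needed with and without a shared protocol.
function customIntegrations(agents: number, tools: number): number {
  return agents * tools; // one bespoke adapter per agent-tool pair
}

function mcpIntegrations(agents: number, tools: number): number {
  return agents + tools; // one MCP client per agent, one MCP server per tool
}

console.log(customIntegrations(5, 8)); // 40
console.log(mcpIntegrations(5, 8)); // 13
```

Adding a ninth tool costs five new adapters in the first model and a single new server in the second.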

The protocol's sampling feature enables agentic workflows where servers can request LLM completions from clients without managing API keys. This is crucial for building autonomous systems that can initiate their own AI interactions based on external events or data changes.

Permission controls ensure user safety while maintaining system flexibility. Users can grant or deny specific tool access on a per-operation basis, creating secure multi-agent environments that maintain human oversight where needed.

MCP Protocol Core Components and Architecture Deep Dive

MCP consists of four main components: hosts (user-facing applications), clients (embedded protocol handlers), servers (tool/data providers), and transports (communication mechanisms). This architecture enables standardized communication between any LLM and external tools through well-defined interfaces and capability discovery.

Understanding Hosts, Clients, and Servers

Hosts are the applications users interact with directly—Claude Desktop, Cursor IDE, VS Code with Copilot, or custom AI applications. These hosts embed MCP clients that handle all protocol communication, capability discovery, and user permission management.

MCP servers are where the magic happens. Each server exposes specific capabilities like GitHub operations, database queries, or file system access. Servers can run locally (connected via STDIO) or remotely (via HTTP+SSE), depending on your security and performance requirements.

The beauty of this architecture is its composability. You can connect multiple servers to a single host, which runs one client per server, enabling rich multi-tool workflows. A data analysis agent might simultaneously use a PostgreSQL server, a GitHub server, and a web scraping server, all through the same standardized interface.
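One way a host might combine several servers is to merge their tool lists into a single catalog. The sketch below assumes each server's tool list has already been fetched; the `ServerTools` type, the `mergeToolLists` helper, and the `server.tool` naming scheme are hypothetical choices for this example, not part of the protocol.

```typescript
// Sketch: merge tool lists from several servers into one catalog.
interface ToolInfo {
  name: string;
  description: string;
}

interface ServerTools {
  serverName: string;
  tools: ToolInfo[];
}

// Prefix each tool with its server name so identical names do not collide.
function mergeToolLists(servers: ServerTools[]): Map<string, ToolInfo> {
  const catalog = new Map<string, ToolInfo>();
  for (const server of servers) {
    for (const tool of server.tools) {
      catalog.set(`${server.serverName}.${tool.name}`, tool);
    }
  }
  return catalog;
}

const catalog = mergeToolLists([
  { serverName: "postgres", tools: [{ name: "query", description: "Run a SQL query" }] },
  { serverName: "github", tools: [{ name: "query", description: "Search repositories" }] },
]);
```

Namespacing matters because two unrelated servers can legitimately expose a tool with the same name, as `query` does here.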

Transport Mechanisms: STDIO vs HTTP+SSE

MCP supports two primary transport mechanisms, each optimized for different use cases. STDIO transport is perfect for local development and trusted environments. It's faster, simpler to debug, and doesn't require network configuration.

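HTTP with Server-Sent Events (SSE) enables remote server deployments and suits shared production systems. This transport supports streaming responses, making it a good fit for long-running operations like large file processing or complex database queries. Note that revisions of the MCP specification from 2025-03-26 onward replace the original HTTP+SSE transport with Streamable HTTP, which can still stream over SSE, so check which one your SDK and host support.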

Choose STDIO for development, personal tools, and situations where the server runs on the same machine as the client. Use HTTP+SSE for production deployments, shared servers, or when you need to scale server capacity independently from client applications.
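Part of what makes STDIO simple to debug is its framing: each JSON-RPC message is a single line of JSON terminated by a newline. The sketch below shows that framing in isolation; `encodeMessage` and `LineDecoder` are hypothetical names for this example, and a real server would normally rely on the SDK's transport class instead.

```typescript
// Sketch of newline-delimited JSON framing, as used by the STDIO transport.
function encodeMessage(message: object): string {
  // JSON.stringify without an indent argument never emits raw newlines.
  return JSON.stringify(message) + "\n";
}

class LineDecoder {
  private buffer = "";

  // Feed a chunk of input; returns every message completed by this chunk.
  push(chunk: string): unknown[] {
    this.buffer += chunk;
    const messages: unknown[] = [];
    let newline = this.buffer.indexOf("\n");
    while (newline !== -1) {
      const line = this.buffer.slice(0, newline).trim();
      this.buffer = this.buffer.slice(newline + 1);
      if (line.length > 0) {
        messages.push(JSON.parse(line));
      }
      newline = this.buffer.indexOf("\n");
    }
    return messages;
  }
}
```

Because stdout carries protocol traffic in this mode, diagnostic output from a STDIO server belongs on stderr.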

Core Primitives: Tools, Resources, and Prompts

MCP defines three core primitives that standardize how AI systems interact with external capabilities. Tools are executable functions that perform actions—think "create GitHub issue," "query database," or "send email." Tools can accept parameters and return structured results.

Resources represent data sources like files, database records, or API responses. Resources are read-only and provide context to the AI system. They're perfect for feeding documentation, logs, or real-time data into your AI workflows.

Prompts are predefined templates that servers can expose to clients. These enable consistent interaction patterns and help users leverage complex tools without memorizing specific syntax or parameters.
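The three primitives can be pictured as plain data shapes. The interfaces below are a simplified sketch based on the fields the MCP specification describes; the example values and the `missingArguments` helper are assumptions added for illustration.

```typescript
// Simplified shapes for the three MCP primitives.
interface Tool {
  name: string;
  description?: string;
  inputSchema: { type: "object"; properties?: Record<string, unknown>; required?: string[] };
}

interface Resource {
  uri: string;
  name: string;
  description?: string;
  mimeType?: string;
}

interface PromptArgument {
  name: string;
  description?: string;
  required?: boolean;
}

interface Prompt {
  name: string;
  description?: string;
  arguments?: PromptArgument[];
}

const createIssue: Tool = {
  name: "create_github_issue",
  description: "Create an issue in a repository",
  inputSchema: { type: "object", properties: { title: { type: "string" } }, required: ["title"] },
};

const appLog: Resource = { uri: "file:///var/log/app.log", name: "Application log", mimeType: "text/plain" };

const reviewPrompt: Prompt = {
  name: "review_code",
  description: "Review a code snippet",
  arguments: [{ name: "code", required: true }, { name: "style_guide" }],
};

// Hypothetical helper: list required prompt arguments the caller did not supply.
function missingArguments(prompt: Prompt, supplied: Record<string, string>): string[] {
  return (prompt.arguments ?? [])
    .filter((arg) => arg.required === true && !(arg.name in supplied))
    .map((arg) => arg.name);
}
```

A rough rule of thumb: tools are invoked by the model, resources are attached by the application, and prompts are picked by the user.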

Setting Up Your MCP Development Environment in 2026

Setting up MCP development requires installing language-specific SDKs, configuring compatible hosts like Claude Desktop or Cursor, and establishing proper development tooling for testing and debugging. The entire setup process typically takes 15-20 minutes for experienced developers.

SDK Installation Across Programming Languages

MCP's SDKs cover the major programming languages. For Python developers, install the official SDK with `pip install mcp`, which includes the server and client libraries; `pip install "mcp[cli]"` adds the `mcp` command-line tool for development.

TypeScript developers can use `npm install @modelcontextprotocol/sdk`. The TypeScript SDK ships with type definitions, which speeds up development and catches many errors before runtime.

Java developers can add MCP support through Maven or Gradle dependencies, while C# developers can install via NuGet packages. All SDKs implement the same protocol, although their API surface and feature coverage can differ, so check the SDK's documentation for the features you need before choosing one based on your team's expertise.

Configuring Claude Desktop and IDE Integrations

Claude Desktop is configured through its `claude_desktop_config.json` file, which you can open from the Developer section of the settings. Add each local server under the `mcpServers` key by specifying the command to run and any required arguments. Claude Desktop then launches the server as a subprocess and communicates with it over STDIO.
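For reference, Claude Desktop reads local server entries from a JSON file named `claude_desktop_config.json`. The sketch below builds such a config as a typed object and prints it as JSON. The `mcpServers` layout with `command`, `args`, and `env` matches the documented format as far as I know; the server name, the placeholder path, and the `LOG_LEVEL` variable are assumptions for this example.

```typescript
// Sketch: generate the contents of claude_desktop_config.json.
interface McpServerEntry {
  command: string;
  args?: string[];
  env?: Record<string, string>;
}

interface ClaudeDesktopConfig {
  mcpServers: Record<string, McpServerEntry>;
}

const desktopConfig: ClaudeDesktopConfig = {
  mcpServers: {
    "my-first-server": {
      command: "npx",
      // Placeholder path: use the absolute path to your own server file.
      args: ["ts-node", "/absolute/path/to/server.ts"],
      env: { LOG_LEVEL: "debug" }, // hypothetical variable read by your server
    },
  },
};

const desktopConfigJson = JSON.stringify(desktopConfig, null, 2);
console.log(desktopConfigJson);
```

Absolute paths are the safer choice here because the host may launch the server from a different working directory than your project.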

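For AI coding tools like Cursor or VS Code with GitHub Copilot, MCP servers are registered through the editor's MCP settings or a JSON config file (for example `.cursor/mcp.json` in Cursor or `.vscode/mcp.json` in VS Code). The editor then asks you to enable or approve the server's tools before they are used.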

Testing your configuration is simple: create a basic "hello world" server and verify it appears in your host's available tools list. Proper setup should show server capabilities within seconds of starting the connection.

Development Tools and Testing Setup

The MCP ecosystem includes useful debugging tools. The MCP Inspector, started with `npx @modelcontextprotocol/inspector`, connects to a server and lets you inspect its capabilities, call tools by hand, and watch protocol messages as they are exchanged.

Set up automated testing with your language's usual framework, such as pytest for Python or Jest for TypeScript. Tool handlers are ordinary async functions, so most of their logic can be unit tested without a host attached.

Version control integration is crucial for MCP development. Store server configurations in your project repository and use environment variables for sensitive settings like database connections or API endpoints.
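A minimal sketch of the environment-variable approach follows. The variable names `DATABASE_URL` and `API_ENDPOINT`, the default endpoint, and the `loadSettings` helper are assumptions for illustration; in a Node.js server you would pass `process.env` as the argument.

```typescript
// Sketch: read sensitive settings from the environment, failing fast when missing.
interface ServerSettings {
  databaseUrl: string;
  apiEndpoint: string;
}

function loadSettings(env: Record<string, string | undefined>): ServerSettings {
  const databaseUrl = env.DATABASE_URL;
  if (!databaseUrl) {
    throw new Error("DATABASE_URL is not set");
  }
  return {
    databaseUrl,
    // Non-secret settings can fall back to a development default.
    apiEndpoint: env.API_ENDPOINT ?? "http://localhost:8080",
  };
}

// Example with an in-memory environment; a real server would pass process.env.
const exampleSettings = loadSettings({ DATABASE_URL: "postgres://localhost/dev" });
```

Failing at startup with a clear message is easier to diagnose than a tool call that breaks later with an opaque connection error.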

Building Your First MCP Server: Step-by-Step Tutorial

Creating your first MCP server involves initializing a project with the appropriate SDK, implementing tool handlers, and configuring transport mechanisms. Following the "30-minute zero-to-working server" approach, most developers can have a functional server running within half an hour.

Creating a Basic MCP Server in TypeScript

Start by creating a new TypeScript project and installing the MCP SDK:

```bash
npm init -y
npm install @modelcontextprotocol/sdk
npm install --save-dev typescript ts-node @types/node
npx tsc --init
```

Create your server file with the basic structure:

```typescript
// server.ts
import { Server } from "@modelcontextprotocol/sdk/server/index.js";
import { StdioServerTransport } from "@modelcontextprotocol/sdk/server/stdio.js";

// Identify the server and declare that it offers tools.
const server = new Server(
  { name: "my-first-server", version: "1.0.0" },
  { capabilities: { tools: {} } }
);
```

This basic setup creates a server that can handle the MCP handshake and capability discovery. The server announces its name, version, and available capabilities to connecting clients.

Implementing Tools and Resource Handlers

Add your first tool by implementing a handler function:

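```typescript
// Place this import with the others at the top of server.ts.
import {
  CallToolRequestSchema,
  ListToolsRequestSchema,
} from "@modelcontextprotocol/sdk/types.js";

// Advertise the tools this server offers.
server.setRequestHandler(ListToolsRequestSchema, async () => {
  return {
    tools: [{
      name: "get_current_time",
      description: "Get the current time",
      inputSchema: { type: "object", properties: {} }
    }]
  };
});

// Execute a tool when the client calls it.
server.setRequestHandler(CallToolRequestSchema, async (request) => {
  if (request.params.name === "get_current_time") {
    return {
      content: [{ type: "text", text: new Date().toISOString() }]
    };
  }
  throw new Error(`Unknown tool: ${request.params.name}`);
});

// Connect over STDIO so a host can launch the server as a subprocess.
async function main() {
  await server.connect(new StdioServerTransport());
}

main().catch((error) => {
  console.error("Failed to start server:", error);
  process.exit(1);
});
```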

This implementation creates a simple time-reporting tool that demonstrates the basic tool pattern: declare capabilities in the tools list, then handle execution in the call tool handler.
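As the number of tools grows, the declare-then-handle pattern is easier to keep consistent if both steps read from one table. The sketch below is dependency-free and does not use the SDK; the `ToolEntry` type, the `echo` tool, and the `listTools` and `callTool` helpers are hypothetical names chosen for this example.

```typescript
// Sketch: a single registry that drives both the tool list and tool execution.
interface TextResult {
  content: { type: "text"; text: string }[];
}

interface ToolEntry {
  description: string;
  inputSchema: { type: "object"; properties: Record<string, unknown> };
  run(args: Record<string, unknown>): TextResult;
}

const text = (value: string): TextResult => ({ content: [{ type: "text", text: value }] });

const registry = new Map<string, ToolEntry>();

registry.set("get_current_time", {
  description: "Get the current time",
  inputSchema: { type: "object", properties: {} },
  run: () => text(new Date().toISOString()),
});

// Hypothetical second tool, added to show that new tools need no dispatcher changes.
registry.set("echo", {
  description: "Return the provided message",
  inputSchema: { type: "object", properties: { message: { type: "string" } } },
  run: (args) => text(String(args.message ?? "")),
});

// Body for a tools/list handler: derived from the registry.
function listTools() {
  const tools: { name: string; description: string; inputSchema: ToolEntry["inputSchema"] }[] = [];
  registry.forEach((entry, name) => {
    tools.push({ name, description: entry.description, inputSchema: entry.inputSchema });
  });
  return { tools };
}

// Body for a tools/call handler: look up the tool and run it.
function callTool(name: string, args: Record<string, unknown> = {}): TextResult {
  const entry = registry.get(name);
  if (!entry) {
    throw new Error(`Unknown tool: ${name}`);
  }
  return entry.run(args);
}
```

With this layout a tool can never be advertised without a handler, or handled without being advertised, because both views come from the same entries.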

Testing and Debugging Your Server

Start your server and test the connection:

```bash
npx ts-node server.ts
```

Connect from Claude Desktop or your chosen host to verify the handshake completes successfully. Check that your tool appears in the available tools list and executes correctly when called.

Use the built-in logging to debug issues:

```typescript
// Log to stderr: with the STDIO transport, stdout carries protocol messages.
server.onerror = (error) => {
  console.error("Server error:", error);
};
```

Common issues include incorrect schema definitions, missing error handling, and transport configuration problems. The SDK's error messages are designed to guide you toward solutions quickly.
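Two of those issues, unvalidated arguments and unhandled errors, can be addressed with a small wrapper. The MCP specification lets a tool result carry `isError: true` so the model can see what went wrong; the `requireString` and `runSafely` helpers and the `city` argument below are hypothetical and only illustrate the idea.

```typescript
// Sketch: validate arguments and report tool failures as results, not crashes.
interface ToolResult {
  content: { type: "text"; text: string }[];
  isError?: boolean;
}

// Reject missing or non-string arguments before doing any work.
function requireString(args: Record<string, unknown>, key: string): string {
  const value = args[key];
  if (typeof value !== "string" || value.length === 0) {
    throw new Error(`Missing or invalid argument: ${key}`);
  }
  return value;
}

// Run a tool body and convert any thrown error into an isError result.
function runSafely(run: () => string): ToolResult {
  try {
    return { content: [{ type: "text", text: run() }] };
  } catch (error) {
    const message = error instanceof Error ? error.message : String(error);
    return { content: [{ type: "text", text: `Tool failed: ${message}` }], isError: true };
  }
}

const okResult = runSafely(() => requireString({ city: "Oslo" }, "city").toUpperCase());
const failedResult = runSafely(() => requireString({}, "city"));
```

Returning the failure as content gives the model a chance to correct its arguments and retry, which a bare exception does not.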

Advanced MCP Features for Multi-Agent Systems

Advanced MCP features include sampling for agentic workflows, comprehensive permission controls, and sophisticated integrations with popular development tools. These capabilities enable building production-grade multi-agent systems that can operate autonomously while maintaining appropriate human oversight.

Implementing Sampling for Agentic Workflows

Sampling enables MCP servers to request LLM completions from connected clients, creating powerful agentic workflows. This feature is particularly valuable for building autonomous systems that can initiate AI interactions based on external events.

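```typescript
// Supplied by your own monitoring code.
declare const errorLog: string;

// Ask the connected client to run an LLM completion on the server's behalf.
const samplingResult = await server.createMessage({
  messages: [{
    role: "user",
    content: {
      type: "text",
      text: "Analyze this error log and suggest fixes: " + errorLog
    }
  }],
  maxTokens: 500
});
```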

This capability enables servers to act as intelligent intermediaries, processing external data and requesting AI analysis when needed. It's perfect for building monitoring systems that can automatically diagnose issues or data processing pipelines that adapt based on content analysis.

The sampling feature eliminates the need for servers to manage their own AI API keys, simplifying deployment and reducing security concerns. Clients handle all AI interactions while servers focus on domain-specific logic.
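On the wire, a sampling call is a JSON-RPC request sent from the server to the client. The sketch below shows the approximate message shapes without using the SDK. The `sampling/createMessage` method name comes from the MCP specification; the helper names, the reduced set of fields, and the model name in the usage example are simplifying assumptions.

```typescript
// Sketch of the wire format behind sampling (a server-to-client request).
interface TextContent {
  type: "text";
  text: string;
}

interface SamplingMessage {
  role: "user" | "assistant";
  content: TextContent;
}

function buildSamplingRequest(id: number, prompt: string, maxTokens: number) {
  const messages: SamplingMessage[] = [
    { role: "user", content: { type: "text", text: prompt } },
  ];
  return {
    jsonrpc: "2.0" as const,
    id,
    method: "sampling/createMessage" as const,
    params: { messages, maxTokens },
  };
}

// Approximate shape of a successful client response (text content only).
interface SamplingResponse {
  jsonrpc: "2.0";
  id: number;
  result: { role: "assistant"; content: TextContent; model: string; stopReason?: string };
}

function extractText(response: SamplingResponse): string {
  return response.result.content.text;
}
```

The client remains in control throughout: it chooses the model, and the host can show the request to the user for approval before anything is sent to an LLM.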

Permission Controls and Security Best Practices

MCP's permission system provides granular control over tool access. Users can approve or deny specific operations, creating secure multi-agent environments that maintain human oversight where appropriate.

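Tools that perform sensitive operations can also confirm approval before acting. In the example below, `requestUserPermission` is a placeholder for your own confirmation logic rather than an SDK function; in practice, most hosts already prompt the user before a tool call reaches the server:

```typescript
// Hypothetical helper: replace with your own confirmation mechanism.
declare function requestUserPermission(message: string): Promise<boolean>;

server.setRequestHandler(CallToolRequestSchema, async (request) => {
  if (request.params.name === "delete_file") {
    const filename = String(request.params.arguments?.filename ?? "");
    // Ask for approval before doing anything destructive.
    const approved = await requestUserPermission("Delete file: " + filename);
    if (!approved) {
      throw new Error("Operation not approved by user");
    }
    // Proceed with file deletion here.
    return { content: [{ type: "text", text: `Deleted ${filename}` }] };
  }
  throw new Error(`Unknown tool: ${request.params.name}`);
});
```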

Security best practices include validating all inputs, implementing proper error handling, and using least-privilege principles for file system and network access. Never trust client-provided data without validation.
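As one example of input validation combined with least privilege, a file-access tool can confine every client-supplied path to a single root directory. The sketch below assumes POSIX-style paths and a hypothetical `resolveInsideRoot` helper. It does not follow symlinks, so a real server would need an additional check on the resolved file.

```typescript
// Sketch: resolve a client-supplied relative path inside rootDir, rejecting escapes.
function resolveInsideRoot(rootDir: string, requested: string): string {
  if (requested.indexOf("\0") !== -1) {
    throw new Error("Path contains a null byte");
  }
  if (requested.charAt(0) === "/") {
    throw new Error("Absolute paths are not allowed");
  }
  const parts: string[] = [];
  for (const segment of requested.split("/")) {
    if (segment === "" || segment === ".") {
      continue; // skip empty and current-directory segments
    }
    if (segment === "..") {
      if (parts.length === 0) {
        throw new Error("Path escapes the allowed directory");
      }
      parts.pop(); // step back up, but never above the root
      continue;
    }
    parts.push(segment);
  }
  return rootDir.replace(/\/+$/, "") + "/" + parts.join("/");
}

console.log(resolveInsideRoot("/srv/data", "logs/../report.txt")); // /srv/data/report.txt
```

Normalizing first and checking afterwards avoids the common mistake of scanning the raw string for `..`, which misses inputs like `a/../../b`.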

Building Custom Integrations (GitHub, PostgreSQL, Web Scraping)

Real-world MCP servers often integrate with popular development tools. GitHub integration enables AI systems to read repositories, create issues, and manage pull requests:

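```typescript
// GitHub integration example
server.setRequestHandler(CallToolRequestSchema, async (request) => {
  if (request.params.name === "create_github_issue") {
    // repo is expected in "owner/name" form.
    const { title, body, repo } = request.params.arguments as {
      title: string; body: string; repo: string;
    };
    const response = await fetch(`https://api.github.com/repos/${repo}/issues`, {
      method: "POST",
      headers: {
        "Authorization": `token ${process.env.GITHUB_TOKEN}`,
        "Accept": "application/vnd.github+json",
        "Content-Type": "application/json"
      },
      body: JSON.stringify({ title, body })
    });
    // Report failures instead of claiming success unconditionally.
    if (!response.ok) {
      throw new Error(`GitHub API returned ${response.status}`);
    }
    return { content: [{ type: "text", text: "Issue created successfully" }] };
  }
  throw new Error(`Unknown tool: ${request.params.name}`);
});
```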

Database integrations follow similar patterns, with proper connection pooling and query validation. Web scraping servers should implement rate limiting and respect robots.txt files to ensure responsible data collection.
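For the rate limiting mentioned above, a token bucket is one common choice. The sketch below is a generic implementation, not something provided by MCP or its SDKs; the `TokenBucket` class, its parameters, and the injectable clock are assumptions made so the behavior can be tested deterministically.

```typescript
// Sketch: token-bucket rate limiter for outbound requests from a scraping server.
class TokenBucket {
  private capacity: number;
  private refillPerSecond: number;
  private now: () => number;
  private tokens: number;
  private lastRefill: number;

  constructor(capacity: number, refillPerSecond: number, now: () => number = Date.now) {
    this.capacity = capacity;
    this.refillPerSecond = refillPerSecond;
    this.now = now;
    this.tokens = capacity; // start with a full bucket
    this.lastRefill = now();
  }

  // Returns true and consumes a token if a request may proceed right now.
  tryAcquire(): boolean {
    const current = this.now();
    const elapsedSeconds = (current - this.lastRefill) / 1000;
    this.tokens = Math.min(this.capacity, this.tokens + elapsedSeconds * this.refillPerSecond);
    this.lastRefill = current;
    if (this.tokens >= 1) {
      this.tokens -= 1;
      return true;
    }
    return false;
  }
}

// Allow bursts of 2 requests, refilling at 1 request per second.
const limiter = new TokenBucket(2, 1);
```

A tool handler would call `limiter.tryAcquire()` before each fetch and return an error result, or wait, when it returns false.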

MCP Protocol vs Alternatives: When to Choose What in 2026

MCP excels in standardization and portability for multi-agent AI systems, while traditional approaches may be better for simple, single-purpose integrations. Understanding when to choose each approach depends on your specific requirements for scalability, maintenance overhead, and vendor independence.

MCP vs Traditional Function Calling

Traditional function calling requires custom integration code for each LLM provider and tool combination. While this approach offers maximum control, it creates significant maintenance overhead and vendor lock-in.

| Aspect | MCP Protocol | Traditional Function Calling |
|---|---|---|
| Setup Time | 30 minutes for basic server | 2-4 hours per integration |
| Vendor Lock-in | None (open standard) | High (provider-specific) |
| Maintenance | Single codebase | Multiple integrations |
| Scalability | Excellent (reusable servers) | Poor (linear complexity) |
| Learning Curve | Moderate (new concepts) | Low (familiar patterns) |

Choose traditional function calling for simple, one-off integrations where you need maximum control and don't plan to support multiple AI providers. MCP is better for any system that might grow or need to support different AI tools.

MCP vs OpenAI Tools and Other Protocols

OpenAI's tools API and similar provider-specific solutions offer tight integration with their respective platforms but lack the universal compatibility that MCP provides.

Compared with writing separate integrations for ChatGPT, Claude, and Gemini, MCP offers one consistent interface. You write your integration once and it works with any MCP-compatible host, whichever model sits behind it.
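To see what the provider-specific alternative involves, the sketch below converts one MCP-style tool definition into the tool formats used by two providers' function-calling APIs. The output shapes reflect my understanding of the OpenAI Chat Completions and Anthropic Messages APIs and should be checked against current provider documentation; the helper names and the example tool are assumptions.

```typescript
// Sketch: one MCP tool definition, adapted to two provider-specific formats.
interface McpTool {
  name: string;
  description?: string;
  inputSchema: Record<string, unknown>;
}

// Assumed OpenAI-style shape: the schema goes under function.parameters.
function toOpenAiTool(tool: McpTool) {
  return {
    type: "function" as const,
    function: {
      name: tool.name,
      description: tool.description ?? "",
      parameters: tool.inputSchema,
    },
  };
}

// Assumed Anthropic-style shape: the schema goes under input_schema.
function toAnthropicTool(tool: McpTool) {
  return {
    name: tool.name,
    description: tool.description ?? "",
    input_schema: tool.inputSchema,
  };
}

const timeTool: McpTool = {
  name: "get_current_time",
  description: "Get the current time",
  inputSchema: { type: "object", properties: {} },
};

console.log(JSON.stringify(toOpenAiTool(timeTool)));
console.log(JSON.stringify(toAnthropicTool(timeTool)));
```

Without a shared protocol, each application maintains adapters like these plus the execution plumbing behind them; with MCP, that translation lives once in the host.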

Performance-wise, MCP's sub-second integration times are competitive with provider-specific solutions while offering significantly better portability. The protocol's streaming support through HTTP+SSE ensures responsive user experiences even with complex operations.

Decision Framework for Your Use Case

Choose MCP when you need:

- Multi-provider AI support
- Complex multi-agent workflows
- Long-term maintainability
- Standardized tool integration
- Vendor independence

Choose traditional approaches when you have:

- Simple, single-purpose integrations
- Existing provider-specific infrastructure
- Team expertise in specific platforms
- Tight performance requirements for simple operations

The decision often comes down to long-term strategy. If you're building AI systems that need to evolve and integrate with multiple tools, MCP's standardization benefits outweigh the initial learning curve.

Real-World MCP Implementation Examples and Case Studies

Successful MCP implementations demonstrate significant improvements in development velocity and system maintainability across diverse use cases. These real-world examples show how organizations are leveraging MCP to build sophisticated AI systems with reduced complexity.

Multi-Agent Code Review System

A software development company built a multi-agent code review system using MCP that reduced review time by 60% while maintaining code quality standards. The system connects multiple specialized agents through standardized MCP interfaces.

The security analysis agent connects to GitHub repositories through an MCP server, scanning for vulnerabilities and security anti-patterns. A code quality agent uses the same GitHub server to analyze style, complexity, and maintainability metrics.

A documentation agent generates and updates README files, API documentation, and inline comments based on code changes. All agents share the same MCP GitHub server, eliminating duplicate integration code and ensuring consistent repository access patterns.

Performance metrics show the system processes pull requests 3x faster than traditional approaches while providing more comprehensive analysis. The standardized MCP interface enabled rapid addition of new analysis capabilities without modifying existing agent code.

Data Analysis Pipeline with External Sources

A financial services firm implemented an MCP-based data analysis pipeline that connects real-time market data, internal databases, and external APIs through standardized interfaces. The system

About the Author

Rai Ansar

Founder of AIToolRanked • AI Researcher • 200+ Tools Tested

I've been obsessed with AI since ChatGPT launched in November 2022. What started as curiosity turned into a mission: testing every AI tool to find what actually works. I spend $5,000+ monthly on AI subscriptions so you don't have to. Every review comes from hands-on experience, not marketing claims.