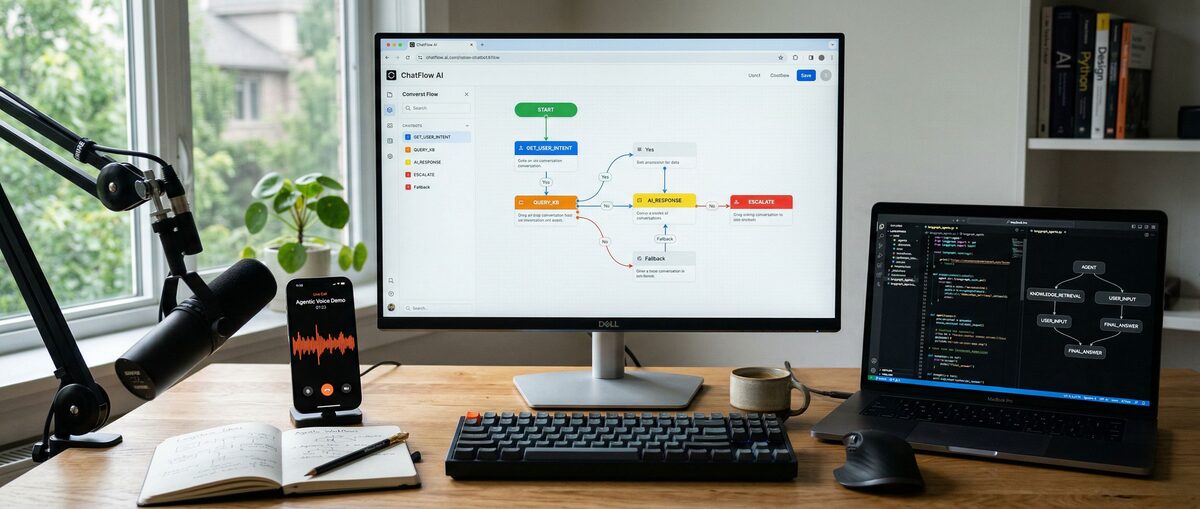

ComfyUI is a free, open-source node editor for Stable Diffusion. Users connect visual blocks to build image generation workflows without code. The platform supports over 1,000 custom node packages and enables advanced techniques like inpainting, LoRA integration, and SDXL model usage through its visual interface.

What is ComfyUI and how does it work?

ComfyUI is a node-based graphical interface for Stable Diffusion that lets users build custom AI image workflows by connecting visual blocks instead of writing code. Each node performs a specific function like loading models, encoding text prompts, or processing images, and users connect them with virtual wires to create reproducible workflows.

The platform operates on a visual workflow system where rectangular nodes represent different functions. Users drag connections between output dots (right side) and input dots (left side) to create data flow paths. Each workflow saves as a JSON file, so a shared or reused workflow reproduces the same settings. With the same models, seed, and software versions, it typically reproduces the same image as well.

ComfyUI supports all major Stable Diffusion models including SD 1.5, SDXL, and custom fine-tuned checkpoints. The interface provides granular control over sampling methods, CFG scales, step counts, and advanced features like ControlNet guidance and LoRA style modifications.

The community has published over 1,000 custom node packages that extend functionality beyond basic text-to-image generation. These additions include video generation, advanced image editing, batch processing, and AI-assisted workflow optimization tools.

Why should beginners choose ComfyUI over other AI image tools?

ComfyUI provides unlimited workflow customization, perfect reproducibility through JSON saves, and access to 1,000+ community nodes, while traditional interfaces limit users to preset options and basic parameter adjustments. The visual node system makes complex AI concepts intuitive without requiring coding knowledge.

| Feature | ComfyUI | Automatic1111 |
|---|---|---|
| Learning Curve | 2-3 hours for basics, visual workflow building | 30 minutes, familiar web interface |
| Workflow Customization | Unlimited node combinations | Limited to built-in features |
| Reproducibility | Perfect JSON workflow saving | Basic parameter saving only |
| Advanced Features | Native inpainting, LoRA, SDXL, ControlNet | Extension-dependent functionality |
| Community Support | 1,000+ custom node packages | 500+ extensions available |
| Best Use Case | Complex workflows, consistent results | Quick generation, casual use |

ComfyUI excels at creating reusable workflows for specific tasks. Users build templates for portrait generation, landscape creation, or product photography, then apply them consistently across projects. This approach eliminates repetitive parameter adjustments and ensures quality consistency.

The visual nature of node connections makes complex concepts accessible. Users see exactly how data flows through their workflow, making troubleshooting and optimization straightforward. Error messages point to specific nodes, and the community provides extensive workflow examples for learning.

How do you install ComfyUI in 2026?

The portable version provides the fastest ComfyUI installation, taking approximately 5 minutes to download and run without complex setup requirements. Cloud installations offer immediate browser access for users without powerful GPUs, while terminal installation provides maximum customization control.

Portable Installation Method

The portable installation works on Windows systems with 8GB+ RAM and requires no technical setup:

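Download the portable package from the Releases page of the official ComfyUI GitHub repository (approximately 4GB)

Extract the archive to your desired location (requires 10GB free space); it ships as a .7z file, so you may need 7-Zip to extract it

Run run_nvidia_gpu.bat (or run_cpu.bat on a machine without an NVIDIA GPU) to launch ComfyUI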

Access the interface through your browser at localhost:8188

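This method includes Python, PyTorch, and all necessary dependencies. The entire process completes in under 5 minutes on broadband internet connections. The package does not include a model, so download at least one checkpoint into the ComfyUI\models\checkpoints folder inside the extracted directory before your first generation.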

Cloud Installation Options

Cloud services eliminate hardware requirements and provide instant access:

RunPod offers pre-configured ComfyUI templates starting at $0.34/hour. One-click deployment includes popular models pre-loaded, scales from basic to high-end GPU configurations, and includes ComfyUI Manager with essential custom nodes.

Cephalon.ai provides managed ComfyUI hosting with plans starting at $29/month for unlimited usage. Features include automatic updates, model management, and built-in sharing capabilities.

RunComfy delivers browser-based ComfyUI access with pay-per-generation pricing starting at $0.01 per image. No setup required, works on any device with internet, and includes access to premium models and nodes.
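Which pricing model is cheapest depends on how many images you generate. As a rough sketch, the arithmetic below uses the starting prices quoted above (which may have changed) and an assumed throughput in images per hour, a number you would need to measure on your chosen GPU. The constant and function names are hypothetical.

```python
# Starting prices quoted above; check each provider for current pricing.
HOURLY_RATE = 0.34    # RunPod, USD per GPU-hour
MONTHLY_PLAN = 29.00  # Cephalon.ai, USD per month
PER_IMAGE = 0.01      # RunComfy, USD per image


def hourly_cost_per_image(images_per_hour: float) -> float:
    """Cost of one image on an hourly rental at an assumed throughput."""
    if images_per_hour <= 0:
        raise ValueError("images_per_hour must be positive")
    return HOURLY_RATE / images_per_hour


def monthly_costs(images_per_month: int, images_per_hour: float) -> dict:
    """Monthly spend under each pricing model for the same workload."""
    return {
        "hourly": images_per_month * hourly_cost_per_image(images_per_hour),
        "monthly_plan": MONTHLY_PLAN,
        "per_image": images_per_month * PER_IMAGE,
    }


# Images per month at which pay-per-image costs the same as the flat plan.
BREAK_EVEN_IMAGES = round(MONTHLY_PLAN / PER_IMAGE)


if __name__ == "__main__":
    # 60 images/hour is an assumed throughput, not a benchmark.
    for plan, cost in monthly_costs(1000, images_per_hour=60).items():
        print(f"{plan}: ${cost:.2f}")
```

At these prices, pay-per-image stays cheaper than the flat monthly plan below 2,900 images a month; the hourly option depends entirely on the throughput you plug in.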

Terminal Installation Process

Manual installation offers maximum control for users comfortable with command-line interfaces:

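Install Python 3 and Git on your system (check the ComfyUI README for the currently recommended Python version)

Clone the repository and enter its folder:

git clone https://github.com/comfyanonymous/ComfyUI.git
cd ComfyUI

Install PyTorch for your GPU as described in the README, then install the remaining dependencies:

pip install -r requirements.txt

Download models into the models/checkpoints directory

Launch ComfyUI:

python main.py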

System Requirements:

Minimum: 8GB RAM, GPU with 4GB VRAM

Recommended: 16GB+ RAM, RTX 3060 or better with 8GB+ VRAM

Storage: 20GB+ free space for models and outputs
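Once ComfyUI is running, you can check which GPU it actually detected instead of guessing. A minimal sketch, assuming the server is on the default port and that its /system_stats endpoint returns a "devices" list whose entries carry "name" and "vram_total" (in bytes); check the response on your version. The thresholds mirror the requirements above, and the helper names are hypothetical.

```python
import json
import urllib.request

GIB = 1024 ** 3
MIN_VRAM_GIB = 4          # "Minimum" line above
RECOMMENDED_VRAM_GIB = 8  # "Recommended" line above


def fetch_system_stats(base_url: str = "http://127.0.0.1:8188") -> dict:
    """Read the /system_stats report from a running ComfyUI server."""
    with urllib.request.urlopen(f"{base_url}/system_stats", timeout=5) as resp:
        return json.loads(resp.read().decode("utf-8"))


def rate_devices(stats: dict) -> list:
    """Return (name, VRAM in GiB, rating) for each device in the report.

    Assumes stats["devices"] is a list of dicts with "name" and
    "vram_total" (bytes).
    """
    results = []
    for device in stats.get("devices", []):
        vram_gib = device.get("vram_total", 0) / GIB
        # Cards report slightly less than their nominal size, so compare
        # against the nearest whole GiB.
        nominal = round(vram_gib)
        if nominal >= RECOMMENDED_VRAM_GIB:
            rating = "recommended"
        elif nominal >= MIN_VRAM_GIB:
            rating = "minimum"
        else:
            rating = "below minimum"
        results.append((device.get("name", "unknown"), round(vram_gib, 1), rating))
    return results


# Usage against a running server:
#   for name, vram, rating in rate_devices(fetch_system_stats()):
#       print(f"{name}: {vram} GiB VRAM -> {rating}")
```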

How do you navigate the ComfyUI interface?

ComfyUI's interface consists of a node canvas where users build workflows by connecting rectangular blocks with virtual wires, controlled through mouse and keyboard shortcuts. The main workspace functions like a digital whiteboard with zoom, pan, and organization capabilities.

Essential Navigation Controls

Master these controls for efficient ComfyUI navigation:

Canvas Movement:

Mouse wheel: Zoom in/out on the canvas

Space + drag: Pan around large workflows

. (period key): Fit the view to the selected nodes, or to the whole workflow when nothing is selected

Node Management:

Left-click: Select individual nodes

Ctrl/Cmd + drag: Select multiple nodes

Shift + drag: Move selected nodes together

Delete key: Remove selected nodes

Connection System:

Drag from output dots: Create connections between nodes

Click the dot in the middle of a wire: Open a menu with an option to delete that connection

Right-click on nodes: Access context menus and options

Understanding Node Structure

Nodes are rectangular blocks that perform specific functions in ComfyUI workflows:

Node Components:

Input dots (left side): Receive data from other nodes

Output dots (right side): Send data to connected nodes

Parameters (inside): Adjustable settings for that function

Title bar: Shows node type and current status

Connection Rules:

Color coding: Different data types use different colored connections

Compatibility: Only compatible input/output types connect

Data flow: Information flows from a node's outputs (right side) into the next node's inputs (left side)

The system provides immediate visual feedback when connections are invalid, helping users learn correct patterns quickly.
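The color coding and compatibility rules come down to matching data types. The sketch below uses a small hand-written type table for the default nodes; ComfyUI declares these types inside each node class, so the table and the can_connect helper are hypothetical illustrations rather than its internal code. The type names (MODEL, CLIP, VAE, CONDITIONING, LATENT, IMAGE) are the ones the default nodes use.

```python
# Output slots by node class, in slot order (simplified, hand-written table).
OUTPUT_TYPES = {
    "CheckpointLoaderSimple": ["MODEL", "CLIP", "VAE"],
    "CLIPTextEncode": ["CONDITIONING"],
    "EmptyLatentImage": ["LATENT"],
    "KSampler": ["LATENT"],
    "VAEDecode": ["IMAGE"],
}

# Input name -> expected type, by node class.
INPUT_TYPES = {
    "CLIPTextEncode": {"clip": "CLIP"},
    "KSampler": {"model": "MODEL", "positive": "CONDITIONING",
                 "negative": "CONDITIONING", "latent_image": "LATENT"},
    "VAEDecode": {"samples": "LATENT", "vae": "VAE"},
    "SaveImage": {"images": "IMAGE"},
}


def can_connect(src_class: str, output_index: int,
                dst_class: str, input_name: str) -> bool:
    """True if the source output slot's type matches the target input's type."""
    outputs = OUTPUT_TYPES.get(src_class, [])
    if not 0 <= output_index < len(outputs):
        return False
    expected = INPUT_TYPES.get(dst_class, {}).get(input_name)
    return expected is not None and outputs[output_index] == expected
```

For example, can_connect("KSampler", 0, "SaveImage", "images") is False: the sampler produces a LATENT, which has to pass through VAE Decode before Save Image can accept it.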

How does the Queue Panel work?

The Queue panel manages image generation requests and provides workflow controls:

Queue Features:

Queue Prompt: Adds current workflow to generation queue

Clear Queue: Removes all pending generations

History: Shows previous generations with exact parameters

Progress: Displays current generation status and completion time

Workflow Execution Process:

Build workflow by connecting nodes on canvas

Set parameters in each node (prompts, settings, etc.)

Click "Queue Prompt" (or press Ctrl+Enter) to start generation

Monitor progress in queue panel

View results in output nodes when complete
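The queue can also be fed from a script, which is handy for batch jobs. A minimal sketch using only the Python standard library, assuming the server runs at the default address and the workflow was exported in API format (the format the /prompt endpoint expects, which differs from the regular saved workflow JSON). build_queue_request and queue_prompt are hypothetical helper names.

```python
import json
import urllib.request
import uuid
from typing import Optional


def build_queue_request(workflow: dict,
                        base_url: str = "http://127.0.0.1:8188",
                        client_id: Optional[str] = None) -> urllib.request.Request:
    """Wrap an API-format workflow in a POST request to the /prompt endpoint."""
    payload = {"prompt": workflow, "client_id": client_id or uuid.uuid4().hex}
    return urllib.request.Request(
        f"{base_url}/prompt",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def queue_prompt(workflow: dict, base_url: str = "http://127.0.0.1:8188") -> dict:
    """Queue the workflow on a running server and return its JSON reply.

    The reply is expected to contain a "prompt_id" for tracking the job.
    """
    request = build_queue_request(workflow, base_url)
    with urllib.request.urlopen(request, timeout=30) as resp:
        return json.loads(resp.read().decode("utf-8"))


# Usage with a running server ("workflow_api.json" is a hypothetical export):
#   with open("workflow_api.json", encoding="utf-8") as f:
#       print(queue_prompt(json.load(f)))
```

Splitting request construction from sending keeps the payload easy to inspect without a server running.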

How do you create your first ComfyUI workflow?

ComfyUI loads with a default text-to-image workflow that includes checkpoint loader, prompt encoders, sampler, and image output—providing immediate generation capability for beginners. This pre-built workflow demonstrates core concepts while producing results without additional setup.

Default Workflow Components

The default workflow includes seven nodes for text-to-image generation:

Load Checkpoint: Loads the AI model (requires downloaded models)

CLIP Text Encode (Positive): Processes main prompt descriptions

CLIP Text Encode (Negative): Handles negative prompts (things to avoid)

Empty Latent Image: Sets the image width, height, and batch size (512x512, batch size 1 by default)

KSampler: Core generation node that runs the denoising steps with your chosen settings

VAE Decode: Converts the sampler's latent output into a viewable image

Save Image: Saves the final image to ComfyUI's output folder

This workflow follows the standard Stable Diffusion pipeline, making it an excellent learning foundation. Each node represents a crucial step in AI image generation.
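Behind the canvas, the same pipeline can be written in ComfyUI's API format: a JSON object mapping node ids to a class_type and its inputs, where a two-element list such as ["4", 1] is a wire from node 4's output slot 1. The sketch below writes the default graph (including its Empty Latent Image node) in that form and computes a valid run order with a depth-first topological sort. The checkpoint filename is a placeholder, and execution_order is a hypothetical helper; ComfyUI's own executor is more involved (for example, it skips nodes whose inputs have not changed).

```python
# Default text-to-image graph in API format: node id -> class and inputs.
# Replace ckpt_name with a file from your models/checkpoints folder.
DEFAULT_WORKFLOW = {
    "4": {"class_type": "CheckpointLoaderSimple",
          "inputs": {"ckpt_name": "v1-5-pruned-emaonly.safetensors"}},
    "5": {"class_type": "EmptyLatentImage",
          "inputs": {"width": 512, "height": 512, "batch_size": 1}},
    "6": {"class_type": "CLIPTextEncode",
          "inputs": {"text": "a lighthouse at sunset", "clip": ["4", 1]}},
    "7": {"class_type": "CLIPTextEncode",
          "inputs": {"text": "blurry, low quality", "clip": ["4", 1]}},
    "3": {"class_type": "KSampler",
          "inputs": {"model": ["4", 0], "positive": ["6", 0],
                     "negative": ["7", 0], "latent_image": ["5", 0],
                     "seed": 42, "steps": 20, "cfg": 8.0,
                     "sampler_name": "euler", "scheduler": "normal",
                     "denoise": 1.0}},
    "8": {"class_type": "VAEDecode",
          "inputs": {"samples": ["3", 0], "vae": ["4", 2]}},
    "9": {"class_type": "SaveImage",
          "inputs": {"filename_prefix": "ComfyUI", "images": ["8", 0]}},
}


def is_link(value) -> bool:
    """A wire is a [source_node_id, output_slot] pair."""
    return (isinstance(value, list) and len(value) == 2
            and isinstance(value[0], str) and isinstance(value[1], int))


def execution_order(workflow: dict) -> list:
    """Order node ids so each node comes after every node it reads from."""
    deps = {node_id: {v[0] for v in node["inputs"].values() if is_link(v)}
            for node_id, node in workflow.items()}
    order, done = [], set()

    def visit(node_id, stack=()):
        if node_id not in deps:
            raise ValueError(f"wire points to unknown node {node_id}")
        if node_id in done:
            return
        if node_id in stack:
            raise ValueError(f"cycle detected at node {node_id}")
        for dep in sorted(deps[node_id]):
            visit(dep, stack + (node_id,))
        done.add(node_id)
        order.append(node_id)

    for node_id in sorted(workflow):
        visit(node_id)
    return order
```

For the default graph this yields the loader, latent, and both prompt encoders first, then KSampler, VAE Decode, and Save Image, which is the pipeline order described above.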

Core Node Configuration

Load Checkpoint Node:

Purpose: Loads chosen AI model

Key Setting: Model selection dropdown

Common Models: Stable Diffusion 1.5, SDXL, custom fine-tuned models

CLIP Text Encode Nodes:

Positive Prompt: Describes desired image content

Negative Prompt: Specifies elements to avoid or exclude

Tips: Use descriptive language and established prompt techniques

KSampler Configuration:

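Steps: 20-50; more steps generally add detail but take longer, with diminishing returns

CFG Scale: 7-12; higher values make the image follow the prompt more strictly

Sampler: dpmpp_2m (DPM++ 2M) provides reliable results; the default is euler

Scheduler: normal (the default) works well for most cases; pair dpmpp_2m with karras to reproduce the "DPM++ 2M Karras" setting from other interfaces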
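In an API-format workflow these KSampler settings are plain input fields, and "DPM++ 2M Karras" splits into sampler_name "dpmpp_2m" plus scheduler "karras". A minimal sketch that updates every KSampler node in place; configure_ksampler is hypothetical, and the name sets are a small subset of the options ComfyUI offers (check the sampler and scheduler dropdowns in your install).

```python
# A small subset of ComfyUI's sampler and scheduler names (not the full list).
KNOWN_SAMPLERS = {"euler", "euler_ancestral", "dpmpp_2m", "dpmpp_2m_sde"}
KNOWN_SCHEDULERS = {"normal", "karras", "exponential", "simple"}


def configure_ksampler(workflow: dict, *, steps: int = 20, cfg: float = 8.0,
                       sampler_name: str = "euler", scheduler: str = "normal",
                       seed=None) -> int:
    """Set sampling options on every KSampler node; return how many changed."""
    if sampler_name not in KNOWN_SAMPLERS:
        raise ValueError(f"unknown sampler: {sampler_name}")
    if scheduler not in KNOWN_SCHEDULERS:
        raise ValueError(f"unknown scheduler: {scheduler}")
    changed = 0
    for node in workflow.values():
        if node.get("class_type") != "KSampler":
            continue
        node["inputs"].update(steps=steps, cfg=cfg,
                              sampler_name=sampler_name, scheduler=scheduler)
        if seed is not None:
            node["inputs"]["seed"] = seed  # a fixed seed makes reruns repeatable
        changed += 1
    return changed
```

For the DPM++ 2M Karras combination you would call configure_ksampler(workflow, steps=25, cfg=7.0, sampler_name="dpmpp_2m", scheduler="karras", seed=42).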

Running Your First Generation

Follow these steps to generate your first image:

Check model loading: Ensure Load Checkpoint node shows valid model

Enter prompt: Click CLIP Text Encode (positive) node and type description

Set negative prompt: Add unwanted elements like "blurry, low quality"

Adjust KSampler: Start with default settings (20 steps, CFG 8)

Queue generation: Click "Queue Prompt" in interface

Wait for results: Watch progress in queue panel

First images appear in the Save Image node within 30-60 seconds, depending on hardware. If errors occur, check that all nodes connect properly and required models are installed.
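When you queue work from a script instead of the browser, finished images can be located through the server's history. A minimal sketch, assuming the /history/{prompt_id} response maps the prompt id to an "outputs" object whose entries list "images" with filename, subfolder, and type, and that /view serves a file given those three query parameters (the layout used in ComfyUI's bundled script examples; verify against your version). The helper names are hypothetical.

```python
import json
import urllib.parse
import urllib.request

BASE_URL = "http://127.0.0.1:8188"


def fetch_history(prompt_id: str, base_url: str = BASE_URL) -> dict:
    """Download the history entry for one queued prompt."""
    with urllib.request.urlopen(f"{base_url}/history/{prompt_id}", timeout=10) as resp:
        return json.loads(resp.read().decode("utf-8"))


def image_urls(history: dict, prompt_id: str, base_url: str = BASE_URL) -> list:
    """Turn a history response into /view URLs for every saved image."""
    entry = history.get(prompt_id)
    if entry is None:
        return []  # not finished yet, or an unknown id
    urls = []
    for node_output in entry.get("outputs", {}).values():
        for image in node_output.get("images", []):
            query = urllib.parse.urlencode({
                "filename": image["filename"],
                "subfolder": image.get("subfolder", ""),
                "type": image.get("type", "output"),
            })
            urls.append(f"{base_url}/view?{query}")
    return urls


# Usage after queueing: image_urls(fetch_history(prompt_id), prompt_id)
```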

For beginners exploring different AI image generation approaches, our Best AI Image Generators 2026 guide compares ComfyUI with other popular tools to help you choose the right platform for your needs.

What are the essential ComfyUI nodes for beginners?

The most important beginner nodes include CLIP Text Encode for prompts, VAE Decode for turning latents into images, Load Checkpoint for models, and Save Image for outputs. These four categories cover most basic workflows, and mastering them makes it much easier to build more complex workflows with confidence.

Text and Prompt Processing Nodes

Text processing nodes control how prompts influence image generation:

CLIP Text Encode:

Function: Converts text prompts into AI-readable format

Inputs: Text string and CLIP model from checkpoint

Usage: Create separate nodes for positive and negative prompts

Performance: Specific, descriptive prompts generally produce better results than very short ones

Advanced Text Nodes (several come from custom node packages, and exact names vary):

Style Prompt: Applies artistic styles automatically

Prompt Builder: Combines multiple prompt elements

Random Prompt: Generates creative prompt variations

String Concatenate: Combines multiple text inputs

Text Input: Provides clean text entry interfaces
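The Prompt Builder and Random Prompt ideas above can be tried in plain Python before you pick a node package. This is a hypothetical sketch, not the code of any of those nodes: build_prompts joins every combination of prompt fragments, and random_prompts draws seeded random combinations so a given seed always yields the same list.

```python
import itertools
import random


def build_prompts(subjects, styles, extras=("",)):
    """Every subject/style/extra combination, joined with commas."""
    prompts = []
    for subject, style, extra in itertools.product(subjects, styles, extras):
        parts = [p for p in (subject, style, extra) if p]  # skip empty fragments
        prompts.append(", ".join(parts))
    return prompts


def random_prompts(subjects, styles, count, seed=0):
    """Seeded random picks, so the same seed reproduces the same variations."""
    rng = random.Random(seed)
    return [f"{rng.choice(subjects)}, {rng.choice(styles)}" for _ in range(count)]
```

Each resulting string could then be placed in the positive CLIP Text Encode node's text before queueing.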

Image Processing and Output Nodes

Image nodes handle input, output, and manipulation of visual content:

Core Image Nodes:

Save Image: Outputs generated images to specified folder

Preview Image: Shows results without saving (useful for testing)

Load Image: Imports existing images for img2img workflows

Image Resize: Adjusts dimensions while maintaining aspect ratios

Advanced Processing:

VAE Encode/Decode: Converts between image and latent space

Upscale Image: Increases resolution using various algorithms

Image Blend: Combines multiple images with different blend modes

Crop Image: Extracts specific regions for focused processing

Quality Enhancement:

Image Sharpen: Improves detail clarity

Color Correction: Adjusts brightness, contrast, saturation

Noise Reduction: Cleans up generation artifacts
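VAE Encode/Decode move data between pixel space and a much smaller latent space, which is why the sampler works quickly. For SD 1.5 and SDXL the VAE uses 4 latent channels and shrinks each side by a factor of 8 (other model families differ). The arithmetic can be sketched as follows; the function names are hypothetical.

```python
LATENT_CHANNELS = 4  # SD 1.5 and SDXL VAEs
DOWNSCALE = 8        # each spatial side shrinks by this factor


def latent_shape(width: int, height: int, batch_size: int = 1) -> tuple:
    """Shape (batch, channels, height, width) of the latent for an image size."""
    if width % DOWNSCALE or height % DOWNSCALE:
        raise ValueError("width and height should be multiples of 8")
    return (batch_size, LATENT_CHANNELS, height // DOWNSCALE, width // DOWNSCALE)


def compression_ratio(width: int, height: int) -> float:
    """How many RGB pixel values each latent value stands for."""
    _, channels, h, w = latent_shape(width, height)
    return (width * height * 3) / (channels * h * w)
```

A 512x512 image becomes a 4x64x64 latent, 48 times fewer values than its RGB pixels; a 1024x1024 SDXL image becomes 4x128x128.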

Model and Checkpoint Management Nodes

Model management nodes control which AI systems power generations:

Essential Model Nodes:

Load Checkpoint: Primary model loader for Stable Diffusion

LoRA Loader: Applies a LoRA, a small add-on model trained for a specific style or subject

VAE Loader: Loads custom VAE models for different color/quality characteristics

Embeddings: Textual inversions placed in models/embeddings and used in a prompt as embedding:filename rather than through a separate loader node

Advanced Model Features:

Model Merge: Combines different checkpoints for hybrid results

Checkpoint Switch: Allows dynamic model changing within workflows

ControlNet Loader: Enables pose, depth, and edge guidance

IP-Adapter: Incorporates reference images for style consistency

Adding New Nodes:

Double-click on empty canvas space to open node search

Right-click for context-sensitive node suggestions

Use ComfyUI Manager to install custom node packages
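Adding a LoRA Loader means splicing it between Load Checkpoint and the nodes that use the model and CLIP. A minimal sketch on an API-format workflow, assuming the core LoraLoader node with inputs model, clip, lora_name, strength_model, and strength_clip, and outputs MODEL (slot 0) and CLIP (slot 1). The add_lora helper and the default new node id "10" are hypothetical.

```python
import copy


def is_link(value) -> bool:
    """API-format wires look like [source_node_id, output_slot]."""
    return isinstance(value, list) and len(value) == 2 and isinstance(value[1], int)


def add_lora(workflow: dict, checkpoint_id: str, lora_name: str,
             strength_model: float = 1.0, strength_clip: float = 1.0,
             new_id: str = "10") -> dict:
    """Return a copy of the workflow with a LoraLoader after the checkpoint.

    Inputs that read MODEL (slot 0) or CLIP (slot 1) from the checkpoint are
    rewired to the LoRA node's matching outputs; VAE (slot 2) stays as-is.
    """
    wf = copy.deepcopy(workflow)
    if new_id in wf:
        raise ValueError(f"node id {new_id} is already used")
    for node in wf.values():
        for name, value in node["inputs"].items():
            if is_link(value) and value[0] == checkpoint_id and value[1] in (0, 1):
                node["inputs"][name] = [new_id, value[1]]
    # Added last so its own inputs still point at the checkpoint.
    wf[new_id] = {
        "class_type": "LoraLoader",
        "inputs": {
            "model": [checkpoint_id, 0],
            "clip": [checkpoint_id, 1],
            "lora_name": lora_name,
            "strength_model": strength_model,
            "strength_clip": strength_clip,
        },
    }
    return wf
```

Working on a deep copy leaves the original workflow untouched, so you can compare runs with and without the LoRA.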

How do you install and use ComfyUI Manager?

ComfyUI Manager is an essential extension that provides one-click installation of custom node packages, automatic updates, and missing node detection—making it the first addition every beginner should install. This tool transforms ComfyUI from a basic interface into a comprehensive AI workflow platform.

ComfyUI Manager Installation Process

ComfyUI Manager installation takes 3-5 minutes and dramatically expands capabilities:

Installation Steps:

Navigate to the ComfyUI folder and locate the custom_nodes directory

Open a terminal/command prompt in the custom_nodes folder

Run the clone command:

git clone https://github.com/ltdrdata/ComfyUI-Manager.git

Restart ComfyUI to activate the manager

Access it via the "Manager" button that appears in the interface

Alternative Installation:

Download the zip file directly from GitHub

Extract it to ComfyUI/custom_nodes/ComfyUI-Manager/ (GitHub names the extracted folder ComfyUI-Manager-main, so rename it)

Restart ComfyUI to enable the functionality

Once installed, ComfyUI Manager adds a dedicated panel for browsing, installing, and managing thousands of community-created nodes.
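The Manager's missing-node check rests on a simple idea: compare the node types a workflow uses with the ones installed. A sketch of that idea, assuming an API-format workflow and a running server whose /object_info endpoint returns an object keyed by node class name. This is an illustration, not the Manager's own code, and it does not handle regular (UI-format) workflow files, whose layout differs.

```python
import json
import urllib.request


def installed_node_types(base_url: str = "http://127.0.0.1:8188") -> set:
    """Node class names the running server knows (the keys of /object_info)."""
    with urllib.request.urlopen(f"{base_url}/object_info", timeout=30) as resp:
        return set(json.loads(resp.read().decode("utf-8")))


def missing_node_types(workflow: dict, installed: set) -> list:
    """Class types used by an API-format workflow but not installed locally."""
    used = {node["class_type"] for node in workflow.values()}
    return sorted(used - installed)


# Usage with a running server:
#   missing_node_types(workflow, installed_node_types())
```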

What are the most important custom node packages?

Several node packages have become standard for ComfyUI users:

Impact Pack (Most Important):

Function: Provides essential utilities missing from base ComfyUI

Key Nodes: FaceDetailer and other detection-based detailing nodes, plus assorted utility nodes

Installation: One-click through ComfyUI Manager

Benefits: Fixes common workflow pain points

ControlNet Nodes:

Purpose: Adds pose, depth, edge, and other guidance controls

Popular Packages: comfyui_controlnet_aux (preprocessors for pose, depth, and edge maps), ComfyUI-Advanced-ControlNet

Use Cases: Character posing, architectural layouts, style transfer

Learning Curve: Moderate difficulty, extremely powerful results

Animation and Video Packages:

AnimateDiff: Creates short video clips from static workflows

Video Helper Suite: Handles video input/output and frame processing

Motion Brush: Adds motion control to specific image regions

Quality Enhancement Nodes:

Ultimate SD Upscale: Tiled upscaling that re-renders the image in sections to add detail at high resolutions

Face Restore: Improves facial details in generated images

Background Removal: Automatic subject isolation tools

FAQ

Q: How long does it take to learn ComfyUI basics?

A: Most beginners master basic text-to-image workflows within 2-3 hours of practice. Advanced techniques like ControlNet and custom nodes require 1-2 weeks of regular use.

Q: What computer specs do I need to run ComfyUI?

A: Minimum requirements include 8GB RAM and a GPU with 4GB VRAM. Recommended specs are 16GB+ RAM and RTX 3060 or better with 8GB+ VRAM for smooth operation.

Q: Can I use ComfyUI without a powerful GPU?

A: Yes, cloud services like RunPod ($0.34/hour), Cephalon.ai ($29/month), and RunComfy ($0.01/image) provide GPU access through your browser without local hardware requirements.

Q: How do I fix "missing nodes" errors in downloaded workflows?

A: Use ComfyUI Manager to automatically detect and install missing custom nodes. The manager identifies required packages and installs them with one click.

Q: What's the difference between ComfyUI and Automatic1111?

A: ComfyUI offers unlimited workflow customization and perfect reproducibility through visual node connections, while Automatic1111 provides a simpler web interface with limited customization options.

Q: Where can I find ComfyUI workflows to download?

A: Popular sources include the ComfyUI subreddit, CivitAI workflow section, OpenArt community, and the official ComfyUI examples repository on GitHub.

Q: How do I save and share my ComfyUI workflows?

A: Use the Save option in the workflow menu to store a workflow as a JSON file, and share that file so others can load the same setup. Images written by the Save Image node also embed the workflow in their PNG metadata, so dragging one onto the canvas reloads the graph that produced it.
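Because images saved by ComfyUI carry their workflow, you can recover it without opening the interface. A minimal standard-library sketch, assuming the workflow sits in an uncompressed PNG tEXt chunk with the keyword "workflow" (ComfyUI also stores the API-format graph under "prompt"); it skips compressed zTXt and iTXt chunks. The helper names are hypothetical.

```python
import json
import struct

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"


def png_text_chunks(data: bytes) -> dict:
    """Collect keyword -> text from a PNG's uncompressed tEXt chunks."""
    if not data.startswith(PNG_SIGNATURE):
        raise ValueError("not a PNG file")
    texts, pos = {}, len(PNG_SIGNATURE)
    while pos + 8 <= len(data):
        # Each chunk: 4-byte length, 4-byte type, data, 4-byte CRC.
        length, chunk_type = struct.unpack(">I4s", data[pos:pos + 8])
        body = data[pos + 8:pos + 8 + length]
        if chunk_type == b"tEXt":
            keyword, _, text = body.partition(b"\x00")
            texts[keyword.decode("latin-1")] = text.decode("latin-1")
        elif chunk_type == b"IEND":
            break
        pos += 12 + length
    return texts


def workflow_from_png(path: str):
    """Return the embedded UI workflow as a dict, or None if there is none."""
    with open(path, "rb") as f:
        texts = png_text_chunks(f.read())
    raw = texts.get("workflow")
    return json.loads(raw) if raw is not None else None
```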

Q: Can ComfyUI generate videos and animations?

A: Yes, with custom nodes like AnimateDiff and Video Helper Suite. These extensions enable short video generation, frame interpolation, and motion control within ComfyUI workflows.


About the Author

Rai Ansar

Founder of AIToolRanked • AI Researcher • 200+ Tools Tested

I've been obsessed with AI since ChatGPT launched in November 2022. What started as curiosity turned into a mission: testing every AI tool to find what actually works. I spend $5,000+ monthly on AI subscriptions so you don't have to. Every review comes from hands-on experience, not marketing claims.