The Model Context Protocol (MCP) is an open standard that enables secure connections between AI applications and data sources. MCP eliminates custom integrations by providing standardized server-client architecture. Major AI platforms including Cursor, Claude Desktop, and JetBrains IDEs support MCP as of 2026.

What is the Model Context Protocol and why does it matter in 2026?

The Model Context Protocol is an open standard that enables secure, two-way connections between AI applications and data sources, eliminating the need for custom integrations between every tool and service. With a standardized client-server architecture, the number of integrations grows additively (tools plus services) rather than multiplicatively (tools times services).

MCP functions as the "REST API moment" for AI tool integration. REST conventions gave web services a common shape that any client could rely on. MCP aims to do the same for how AI applications access external data and capabilities.

The November 2025 specification update addressed production concerns including authentication extensions, server identity verification, and security controls. These enhancements moved MCP from experimental technology to enterprise-ready infrastructure.

MCP operates through three components. MCP servers expose capabilities like database access, file operations, or API integrations. A PostgreSQL server enables AI tools to query databases. A GitHub server facilitates code review workflows.

MCP clients are AI applications that connect to servers. Popular clients include Cursor for code editing, Claude Desktop for AI assistance, Windsurf for development workflows, and JetBrains IDEs for integrated development.

The protocol layer handles communication between clients and servers. It manages discovery, authentication, capability negotiation, and request-response patterns. This standardized communication eliminates custom integration code.

Before MCP, connecting 10 AI tools to 10 data sources required up to 100 custom integrations. Each connection needed unique code, ongoing maintenance, and updates when systems changed. This multiplicative growth made comprehensive AI tool adoption impractical.

MCP turns that multiplicative problem into additive scaling. The same 10 tools and 10 services need only 20 implementations in total: 10 servers and 10 clients. Each new tool or service adds one integration point rather than requiring connections to everything else.
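The counts above can be checked with a few lines of arithmetic. This is only an illustrative sketch; the function names are made up for this example and are not part of any MCP SDK.

```python
def point_to_point_integrations(tools: int, services: int) -> int:
    """Without a shared protocol, every tool needs a connector to every service."""
    return tools * services


def mcp_implementations(tools: int, services: int) -> int:
    """With MCP, each tool ships one client and each service ships one server."""
    return tools + services


if __name__ == "__main__":
    for n in (5, 10, 20):
        print(n, point_to_point_integrations(n, n), mcp_implementations(n, n))
```

Doubling both sides quadruples the point-to-point count but only doubles the MCP count.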

Traditional integration approaches rely on proprietary APIs and custom connectors. Each AI tool vendor creates unique integration methods. Developers must learn multiple systems and maintain separate codebases. This fragmentation limits tool interoperability and increases technical debt.

MCP provides a vendor-neutral alternative that works across platforms. Tools supporting MCP automatically integrate with any MCP server. This composability enables developers to choose AI tools based on capabilities rather than integration constraints.

How does MCP protocol architecture work with servers, clients, and communication patterns?

MCP architecture consists of servers that expose capabilities, clients that consume those capabilities, and a standardized protocol layer that handles secure communication between them. This design enables any MCP-compatible AI tool to work with any MCP server without custom integration code.

The protocol exchanges JSON-RPC 2.0 messages in a request-response pattern, with one-way notifications for events. Clients discover server capabilities, negotiate authentication, and execute actions through standardized message formats. This consistency makes MCP implementations predictable and maintainable.

The specification defines two standard transports: stdio for local connections and Streamable HTTP for remote access, which can stream server messages using Server-Sent Events. Custom transports can also be built on the same message format, so MCP works in both local development and distributed cloud architectures.
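For the stdio transport, messages travel as newline-delimited JSON: one message per line, with no raw newlines inside a message. A minimal sketch of that framing follows; the function names are illustrative, and any text stream works in place of a real process pipe.

```python
import json
from typing import Iterator, TextIO


def write_message(stream: TextIO, message: dict) -> None:
    """Write one message as a single line; json.dumps escapes embedded newlines."""
    stream.write(json.dumps(message, separators=(",", ":")) + "\n")
    stream.flush()


def read_messages(stream: TextIO) -> Iterator[dict]:
    """Yield one decoded message per non-empty line."""
    for line in stream:
        line = line.strip()
        if line:
            yield json.loads(line)
```

In a real client the stream would be the server process's stdin and stdout; an in-memory `io.StringIO` is enough to try the framing out.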

MCP servers act as bridges between AI tools and existing systems. A PostgreSQL MCP server enables AI applications to query databases, analyze data patterns, and generate insights from live business data. The server handles query optimization, security controls, and result formatting.

GitHub MCP servers integrate AI tools with code repositories. They enable automated code reviews, documentation generation, and issue management. AI assistants read code, suggest improvements, and create pull requests through standardized MCP interactions.

Filesystem MCP servers provide controlled access to local and remote file systems. They enable AI tools to read documentation, analyze logs, and process data files while maintaining access controls. This capability supports AI-powered development workflows.

Cursor leads MCP adoption among code editors with built-in server discovery and configuration management. Developers connect Cursor to codebases, databases, and development tools through configuration changes. This integration enables context-aware code suggestions and automated development tasks.

Claude Desktop provides comprehensive MCP support for AI assistance. Users configure multiple MCP servers to give Claude access to files, databases, and external services. This transforms Claude from a text-based assistant into an automation platform.

JetBrains IDEs integrate MCP support across their product line. IntelliJ IDEA, PyCharm, and other JetBrains tools connect to MCP servers for enhanced development workflows. This integration supports database-driven applications and API development.

The MCP protocol layer handles server discovery through standardized capability announcements. When clients connect to servers, they receive detailed information about available tools, resources, and access requirements. This discovery process reduces manual configuration and setup complexity.
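Discovery results are plain data that a client can inspect. The sketch below assumes the usual shape of a `tools/list` result, a `tools` array whose entries carry a `name` and a JSON Schema under `inputSchema`. The helper and the sample tool are hypothetical.

```python
def summarize_tools(list_result: dict) -> dict[str, list[str]]:
    """Map each advertised tool name to its required argument names."""
    summary = {}
    for tool in list_result.get("tools", []):
        schema = tool.get("inputSchema", {})
        summary[tool["name"]] = list(schema.get("required", []))
    return summary


if __name__ == "__main__":
    # Hand-written sample standing in for a server's reply.
    sample = {
        "tools": [
            {
                "name": "read_file",
                "description": "Read a file from an allowed directory",
                "inputSchema": {
                    "type": "object",
                    "properties": {"path": {"type": "string"}},
                    "required": ["path"],
                },
            }
        ]
    }
    print(summarize_tools(sample))
```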

Authentication in MCP uses extensible mechanisms that support various security models. The November 2025 specification added authentication extensions supporting OAuth, API keys, and certificate-based authentication. These extensions ensure secure access while maintaining protocol simplicity.

Capability negotiation allows clients and servers to agree on supported features and access levels. Servers expose read-only or read-write access to different resources based on client permissions. This granular control enables secure multi-tenant deployments.
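At the protocol level, negotiation starts with the `initialize` exchange, in which the server declares the feature groups it supports under a `capabilities` key. The sketch below only intersects those groups with what a client wants; the helper name and sample reply are illustrative, and per-resource access levels would be enforced by the server itself.

```python
def negotiated_features(client_wants: set[str], initialize_result: dict) -> set[str]:
    """Return the features the client wants that the server also declared."""
    declared = set(initialize_result.get("capabilities", {}))
    return client_wants & declared


if __name__ == "__main__":
    # Hand-written sample of an initialize result.
    reply = {
        "protocolVersion": "2025-06-18",
        "capabilities": {"tools": {"listChanged": True}, "resources": {}},
        "serverInfo": {"name": "example-server", "version": "1.0.0"},
    }
    print(sorted(negotiated_features({"tools", "prompts"}, reply)))
```

A client can use the result to skip requests the server never offered, such as listing prompts on a server that only declares tools.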

How do you implement MCP step-by-step for beginners?

Getting started with MCP requires selecting an initial server, configuring your AI client, testing the connection, and gradually expanding capabilities based on your specific use cases. This four-step approach minimizes complexity while building practical experience with the protocol.

Most developers start with the filesystem server because it provides immediate benefits without complex external dependencies. Once you understand basic patterns, adding database or API integrations becomes straightforward.

System requirements include Python 3.10+ or Node.js 18+ depending on your implementation language. Official SDKs are available for both Python and TypeScript. Windows, macOS, and Linux are supported platforms.

Development environment setup follows consistent patterns across operating systems. macOS users install Python and Node.js through Homebrew. Ubuntu users leverage apt packages. Windows developers use official installers or Windows Subsystem for Linux.

Package managers streamline MCP installation. Python developers use pip or the uv package manager for faster installations. JavaScript developers choose npm, yarn, or pnpm based on project requirements.

The filesystem MCP server provides secure access to local files and directories. It demonstrates core MCP concepts without requiring external services. Installation takes minutes with the right commands.

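For Python installations, assuming a filesystem server is published on PyPI under this name (the reference implementation is distributed through npm):

```bash
pip install mcp-server-filesystem
```

For Node.js environments:

```bash
npm install @modelcontextprotocol/server-filesystem
```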

Configuration requires specifying which directories the server can access. The reference filesystem server takes its allowed directories as command-line arguments, so list only the paths the AI tool genuinely needs. This security-first approach prevents unauthorized file access while enabling AI tool functionality.
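The allow-list idea can be sketched as a path check. This is not the filesystem server's actual code, just one common way to confirm that a requested path stays inside an allowed directory once `..` segments and symlinks are resolved.

```python
from pathlib import Path


def is_allowed(requested: str, allowed_dirs: list[str]) -> bool:
    """True if the resolved path is inside one of the allowed directories."""
    target = Path(requested).resolve()
    for directory in allowed_dirs:
        root = Path(directory).resolve()
        if target == root or root in target.parents:
            return True
    return False
```

Resolving before comparing is the important step: a naive string-prefix check would accept a path such as `/allowed/../secret`.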

Cursor configuration involves adding MCP server details to editor settings. Navigate to Cursor Settings → MCP Servers and add your filesystem server configuration. Include the server executable path, allowed directories, and security parameters.

Claude Desktop uses a JSON configuration file, claude_desktop_config.json, in your user directory. Each entry under mcpServers names the command that launches a server, its arguments, and any environment variables:

```json
{
  "mcpServers": {
    "filesystem": {
      "command": "mcp-server-filesystem",
      "args": ["/path/to/allowed/directory"],
      "env": {}
    }
  }
}
```
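If you manage this file with a script, a small helper can add a server entry without disturbing the rest of the configuration. This is a sketch under the assumption that the file follows the `mcpServers` layout shown above; `add_server` is a hypothetical name.

```python
import json


def add_server(config: dict, name: str, command: str, args: list[str]) -> dict:
    """Return a copy of config with one more entry under "mcpServers"."""
    servers = dict(config.get("mcpServers", {}))
    servers[name] = {"command": command, "args": list(args), "env": {}}
    return {**config, "mcpServers": servers}


if __name__ == "__main__":
    updated = add_server(
        {}, "filesystem", "mcp-server-filesystem", ["/path/to/allowed/directory"]
    )
    print(json.dumps(updated, indent=2))
```

Because the function returns a new dictionary, the original configuration is left untouched until you decide to write the result back to disk.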

JetBrains IDE configuration follows similar patterns across IDEs. Access MCP settings through Preferences → Tools → MCP and add server configurations. The IDE validates configurations and shows connection status indicators.

Connection testing verifies that your AI client can communicate with MCP servers. Start with simple requests like "list files in my project directory" or "read the README file." Successful connections show immediate results with file listings or content display.
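Before sending prompts, a quick pre-flight check can rule out one common failure: a configured server command that is not on the PATH. The sketch below assumes a configuration dictionary with an `mcpServers` section; `missing_commands` is a hypothetical helper, not part of any client.

```python
import shutil


def missing_commands(config: dict) -> list[str]:
    """Names of configured servers whose command cannot be found."""
    return [
        name
        for name, entry in config.get("mcpServers", {}).items()
        if shutil.which(entry.get("command", "")) is None
    ]
```

An empty list means every command was found. It does not prove the server speaks MCP correctly, so a simple request from the client is still the real test.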

How do you build custom MCP servers using Python and TypeScript?

Custom MCP servers enable integration with proprietary systems, internal APIs, and specialized data sources that don't have existing server implementations. The official SDKs provide frameworks for building production-ready servers with minimal boilerplate code.

Server development follows established patterns regardless of implementation language. You define capabilities, implement request handlers, and configure security controls. The SDKs handle protocol details, allowing focus on business logic and integration requirements.

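Python MCP development uses the official mcp package for protocol handling and server infrastructure. The SDK provides decorators, type hints, and utility functions that simplify server implementation. The example below uses the SDK's FastMCP class; run_database_query is a placeholder for your own integration:

```python
from mcp.server.fastmcp import FastMCP

app = FastMCP("my-custom-server")


async def run_database_query(query: str) -> list:
    """Placeholder: replace with your database integration logic."""
    raise NotImplementedError("connect this to your database")


@app.tool(name="database_query")
async def execute_query(query: str) -> str:
    """Run a query and return the result as text."""
    result = await run_database_query(query)
    return f"Query result: {result}"


if __name__ == "__main__":
    # Serves over stdio by default, so a local MCP client can launch it.
    app.run()
```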

Database integration requires attention to security and performance. Use connection pooling for concurrent requests, bind user-supplied values as query parameters and validate queries to prevent SQL injection, and consider read-only connections for most MCP use cases. The MCP SDK handles the protocol, not the database, so pooling and parameter binding come from your database driver.
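Two of these safeguards, read-only connections and bound parameters, can be shown with the standard library. The sketch uses SQLite purely as a stand-in for your own database; `run_read_only` is a hypothetical helper, and a production server would add pooling and timeouts.

```python
import sqlite3
from contextlib import closing


def run_read_only(db_path: str, sql: str, params: tuple = ()) -> list[tuple]:
    """Execute one statement on a read-only connection with bound parameters."""
    uri = f"file:{db_path}?mode=ro"  # mode=ro makes SQLite reject writes
    with closing(sqlite3.connect(uri, uri=True)) as conn:
        return conn.execute(sql, params).fetchall()
```

Values passed in `params` are bound by the driver rather than spliced into the SQL text, and any write attempted through this connection fails with `sqlite3.OperationalError`.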

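TypeScript MCP servers offer type safety and integration with JavaScript ecosystems. The official SDK provides comprehensive type definitions that catch integration errors at compile time. The example below follows the 1.x SDK's import paths and uses Zod for the input schema; check both against your installed version:

```typescript
import { McpServer } from "@modelcontextprotocol/sdk/server/mcp.js";
import { StdioServerTransport } from "@modelcontextprotocol/sdk/server/stdio.js";
import { z } from "zod";

const server = new McpServer({
  name: "my-custom-server",
  version: "1.0.0",
});

// Register a tool; the SDK validates arguments against the Zod shape.
server.tool(
  "api_request",
  "Make HTTP requests to external APIs",
  { url: z.string().url(), method: z.string() },
  async ({ url, method }) => {
    // API integration logic
    const response = await fetch(url, { method });
    // Tool results are returned as a list of content items.
    return { content: [{ type: "text", text: await response.text() }] };
  }
);

// Serve over stdio (top-level await requires an ES module).
await server.connect(new StdioServerTransport());
```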

Tool execution in MCP servers handles dynamic operations like database queries, API calls, or file operations. Tools receive structured input parameters and return formatted results to AI clients. Input validation prevents security vulnerabilities and ensures reliable operation.
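Input validation can be as simple as checking arguments against the tool's declared schema before doing any work. The validator below is a deliberately small sketch that covers only required keys, unexpected keys, and a few primitive types. It is not a full JSON Schema implementation, and the names are illustrative.

```python
_JSON_TYPES = {"string": str, "number": (int, float), "boolean": bool, "object": dict}


def validate_arguments(schema: dict, arguments: dict) -> list[str]:
    """Return a list of problems; an empty list means the arguments pass."""
    problems = []
    properties = schema.get("properties", {})
    for name in schema.get("required", []):
        if name not in arguments:
            problems.append(f"missing required argument: {name}")
    for name, value in arguments.items():
        if name not in properties:
            problems.append(f"unexpected argument: {name}")
            continue
        expected = _JSON_TYPES.get(properties[name].get("type"))
        if expected is not None and not isinstance(value, expected):
            problems.append(f"wrong type for argument: {name}")
    return problems
```

In practice a schema library, or the validation built into an SDK, is a better choice; the point is that a tool should reject malformed input before it touches a database or network.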

Resource provisioning supplies static data and context to AI applications. Resources include documentation, configuration files, or reference data that AI tools need for decision-making. Unlike tools, resources are typically read-only and cached for performance.

Authentication strategies in custom MCP servers range from API keys to OAuth flows. The November 2025 specification includes authentication extensions that standardize these patterns. Choose authentication methods based on security requirements and existing infrastructure.
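For the simplest case, a shared API key, the main pitfall is comparing secrets with `==`, which can leak information through timing. A minimal sketch using the standard library follows; the function name is hypothetical, and how the key reaches the server depends on your transport.

```python
import hmac


def api_key_is_valid(presented: str, expected: str) -> bool:
    """Compare keys in constant time to avoid timing side channels."""
    return hmac.compare_digest(presented.encode(), expected.encode())
```

Load the expected key from an environment variable or a secret store rather than hard-coding it in the server source.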

Authorization controls determine what actions authenticated clients can perform. Implement role-based access control (RBAC) for complex scenarios or simple read/write permissions for basic use cases. Document permission requirements to help users configure appropriate access levels.
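A role-based check can start as a lookup table from roles to permitted actions. The roles and actions below are invented for illustration; a real deployment would load them from configuration and tie them to authenticated identities.

```python
# Hypothetical roles and the actions each one may perform.
ROLE_PERMISSIONS: dict[str, set[str]] = {
    "viewer": {"read"},
    "editor": {"read", "write"},
}


def is_permitted(role: str, action: str) -> bool:
    """Unknown roles get no permissions at all (deny by default)."""
    return action in ROLE_PERMISSIONS.get(role, set())
```

Denying by default means a mistyped or newly added role cannot perform any action until it is explicitly granted permissions.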

What are real-world MCP use cases and implementation scenarios?

MCP implementations solve business problems by connecting AI tools with existing systems and workflows. The most successful deployments focus on specific use cases rather than trying to integrate everything at once. This targeted approach delivers immediate value while building expertise for future expansions.

Common implementation patterns include data analysis workflows, development automation, customer service enhancement, and content management systems. Each pattern has proven architectures and best practices that reduce implementation risk and accelerate time-to-value.

Database MCP servers transform AI tools into data analysis platforms. Instead of exporting data to spreadsheets or running manual queries, analysts ask AI assistants to investigate trends, identify anomalies, and generate insights directly from live databases.

PostgreSQL integration ranks among the most popular MCP implementations. The server supports read-only connections for safety, query optimization for performance, and result formatting for AI consumption. Analysts can ask questions like "What are our top 10 customers by revenue this quarter?" and receive immediate, accurate results.

Development workflow automation connects AI tools with code repositories, CI/CD pipelines, and project management systems. Developers use MCP-enabled AI assistants to review code, generate documentation, and manage deployments through natural language commands.

Customer service enhancement integrates AI tools with CRM systems, knowledge bases, and communication platforms. Support agents use AI assistants that can access customer history, product documentation, and escalation procedures through MCP connections.

Content management workflows connect AI tools with document repositories, media libraries, and publishing systems. Content creators use AI assistants to research topics, generate drafts, and manage publication workflows through standardized MCP interfaces.

Financial analysis systems integrate AI tools with accounting software, market data feeds, and reporting platforms. Financial analysts use AI assistants to generate reports, analyze trends, and identify investment opportunities through MCP-enabled data access.

Related Resources

Explore more AI tools and guides

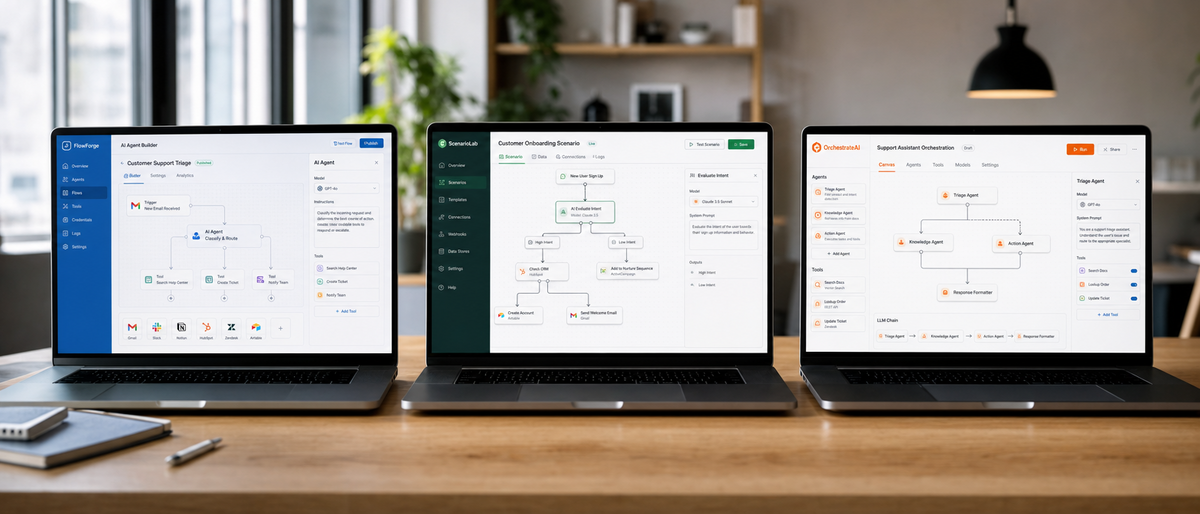

Best No-Code AI Agent Builders 2026: Ultimate Hands-On Review of Top Platforms for Effortless Autonomous Agents and Workflow Automation

AI Personal Assistant Comparison 2026: Ultimate Hands-On Review of Top Tools Including Hologram Companion Devices

Best No-Code AI Agent Builders 2026: Ultimate SmythOS vs Voiceflow vs Bubble Comparison for LLM Integration and Scalability

Best AI Code Review Tools 2026: Ultimate Hands-On Review of Top Platforms for Automated Code Analysis, Bug Detection, and Developer Collaboration

Best Free AI Headshot Generators 2026: Ultimate Hands-On Review of Top Tools for Professional Avatars and Profile Images

More ai agents articles

About the Author

Rai Ansar

Founder of AIToolRanked • AI Researcher • 200+ Tools Tested

I've been obsessed with AI since ChatGPT launched in November 2022. What started as curiosity turned into a mission: testing every AI tool to find what actually works. I spend $5,000+ monthly on AI subscriptions so you don't have to. Every review comes from hands-on experience, not marketing claims.