Ollama is a free, open-source tool that runs AI models locally on Windows, macOS, and Linux systems. Users download models like Llama 3.2:8B (4.7GB) and chat with AI without internet connectivity or subscription fees.

What are the benefits of running AI locally with Ollama in 2026?

Ollama provides complete data privacy, zero subscription costs, and offline functionality while delivering performance that rivals cloud services through local GPU acceleration and instant response times.

Privacy and Data Control

Your conversations, documents, and sensitive information never leave your computer when you run AI locally with Ollama. Cloud AI services send every query to remote servers, where providers may log, analyze, and use your data to train future models.

IBM's 2024 Cost of a Data Breach Report puts the global average cost of a data breach at $4.88 million. Local AI removes third-party AI servers from that risk by keeping all processing on your device.

Lawyers reviewing confidential documents, developers working on proprietary code, and researchers handling sensitive data choose local AI to maintain complete control over their intellectual property.

Cost Savings vs Cloud APIs

OpenAI's GPT-4 costs $30 per million input tokens. Claude Pro charges $20 monthly for casual use. Heavy users spend $100-500 monthly on AI subscriptions.

Ollama itself is free, so the only possible cost is hardware. If your current machine meets the requirements, that cost is zero:

Year 1 cloud costs: $240-$6,000 depending on usage

Ollama total cost: $0 (uses existing hardware)

Break-even time: Immediate
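If you do buy hardware for local AI, break-even is no longer immediate. The following is a minimal sketch of that arithmetic; the $600 upgrade price is a hypothetical figure, not one from this guide, and electricity costs are ignored.

```python
import math


def break_even_months(hardware_cost: float, monthly_cloud_cost: float) -> int:
    """Months of cloud spending needed to equal a one-time hardware cost."""
    if monthly_cloud_cost <= 0:
        raise ValueError("monthly_cloud_cost must be positive")
    return math.ceil(hardware_cost / monthly_cloud_cost)


# Existing hardware: nothing to recoup, so break-even is immediate.
print(break_even_months(0, 20))     # 0
# Hypothetical $600 GPU upgrade against a $20/month subscription.
print(break_even_months(600, 20))   # 30
# The same hypothetical upgrade against $500/month of heavy usage.
print(break_even_months(600, 500))  # 2
```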

Performance and Speed Benefits

NVIDIA GPUs provide 5x speed improvements through CUDA acceleration. Apple Silicon Macs excel at running smaller models efficiently through unified memory architecture.

Local inference eliminates network latency entirely. Cloud responses take 2-5 seconds, while a local model starts responding as soon as it is loaded. This responsiveness makes AI interaction feel like natural conversation rather than a series of web requests.

What hardware do you need to run AI locally with Ollama?

You need at least 8GB of RAM (16GB+ recommended) and Windows 10/11 (build 1903+), macOS, or Linux. GPU acceleration is optional but provides roughly a 5x performance boost over CPU-only processing.

Minimum vs Recommended Specs

Minimum Requirements:

8GB RAM (tight but workable)

Windows 10/11 (build 1903+), macOS 10.15+, or Linux

10GB free storage space

Internet for initial download

Recommended Setup:

16GB+ RAM for smooth multitasking

NVIDIA GPU with 8GB+ VRAM

SSD storage for faster model loading

Stable internet for model downloads

GPU Acceleration Setup

NVIDIA GPUs dramatically improve performance through CUDA acceleration. A mid-range RTX 4060 runs Llama 3.2:8B at 50+ tokens per second while CPU-only inference manages 5-10 tokens per second.

Apple Silicon Macs (M1/M2/M3) provide excellent efficiency for smaller models. Because the CPU and GPU share unified memory, they can load larger models than a discrete GPU with a comparable amount of dedicated VRAM.

AMD GPU support exists but requires additional configuration. NVIDIA remains the most straightforward option for Windows users seeking maximum performance.
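To put the tokens-per-second figures above in practical terms, the sketch below converts them into waiting time for a single answer. The 500-token answer length is an assumption chosen for illustration.

```python
def generation_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Time to generate num_tokens at a steady generation rate."""
    return num_tokens / tokens_per_second


# Rates quoted in this guide: 50+ tok/s on an RTX 4060, 5-10 tok/s on CPU.
for label, rate in [("GPU, 50 tok/s", 50), ("CPU, 10 tok/s", 10), ("CPU, 5 tok/s", 5)]:
    seconds = generation_seconds(500, rate)
    print(f"{label}: {seconds:.0f} s for a 500-token answer")
```

At these rates a 500-token answer takes about 10 seconds on the GPU and 50 to 100 seconds on CPU only.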

Storage Considerations

AI models require significant storage space:

Llama 3.2:8B: 4.7GB

Llama 3.1:70B: 40GB

Code Llama 34B: 19GB

Plan for 50-100GB if you want multiple models available. SSDs dramatically improve model loading times compared to traditional hard drives.
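A quick way to sanity-check a storage plan is to add up the model sizes listed above and compare the total with free disk space. This is a rough sketch: the sizes are the ones quoted in this guide, and the 10GB headroom default is an assumption.

```python
import shutil

# Sizes in GB as listed in this guide.
MODEL_SIZES_GB = {
    "Llama 3.2:8B": 4.7,
    "Llama 3.1:70B": 40.0,
    "Code Llama 34B": 19.0,
}


def total_size_gb(models) -> float:
    """Combined download size of the named models."""
    return sum(MODEL_SIZES_GB[name] for name in models)


def fits_on_disk(models, path: str = ".", headroom_gb: float = 10.0) -> bool:
    """True if the models plus some headroom fit in the free space at path."""
    free_gb = shutil.disk_usage(path).free / 1e9
    return total_size_gb(models) + headroom_gb <= free_gb


wanted = list(MODEL_SIZES_GB)
print(f"Total for all three models: {total_size_gb(wanted):.1f} GB")
print("Fits on this disk:", fits_on_disk(wanted))
```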

How do you install Ollama on your computer?

Download the 200MB installer from ollama.com, run it as administrator on Windows, and verify the installation with the ollama --version command. The entire process takes 90 seconds to 3 minutes.

Windows Installation

Download the installer from ollama.com (approximately 200MB)

Run OllamaSetup.exe as administrator (right-click → "Run as administrator")

Follow the installation wizard - it handles PATH configuration automatically

Restart your command prompt to refresh environment variables

The installer automatically adds Ollama to your system PATH and creates desktop shortcuts. Windows SmartScreen warns about the installer - click "More info" then "Run anyway" if prompted.

macOS Installation

Download Ollama.app from the official website

Drag to Applications folder like any Mac app

Launch Ollama - it adds a menu bar icon automatically

Grant necessary permissions when macOS prompts

macOS users benefit from clean menu bar integration. The app runs silently in the background, ready to serve AI requests through the command line or compatible applications.

Linux Installation

Linux installation uses a simple one-line command:

bash

curl -fsSL https://ollama.com/install.sh | sh

This script automatically detects your distribution and installs appropriate packages. It works on Ubuntu, Debian, CentOS, and most major distributions.

For manual installation, download the binary and place it in /usr/local/bin/. The installation script handles service configuration automatically.

Verification Steps

Confirm your installation worked correctly:

bash

ollama --version

You should see version information (0.1.22 or newer as of 2026). If the command isn't recognized, restart your terminal or check your PATH configuration.
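The same check can be scripted, which is handy in setup scripts. The sketch below only looks up the binary on PATH and runs the --version command shown above; the helper name ollama_version is made up for this example.

```python
import shutil
import subprocess


def ollama_version(binary: str = "ollama"):
    """Return the output of `<binary> --version`, or None if it is not on PATH."""
    path = shutil.which(binary)
    if path is None:
        return None
    result = subprocess.run(
        [path, "--version"], capture_output=True, text=True, timeout=10
    )
    # Some tools print version info to stderr, so fall back to it.
    return result.stdout.strip() or result.stderr.strip()


version = ollama_version()
if version:
    print(version)
else:
    print("ollama not found on PATH; restart the terminal or check PATH")
```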

Test the server functionality:

bash

ollama serve

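This starts the Ollama server on port 11434. If the desktop app or a background service is already running, the port is already in use and you can skip this step. To confirm the server is up, open http://localhost:11434 in a browser; it responds with "Ollama is running".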
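A small script can perform the same server check by requesting the root URL on port 11434 and looking for the "Ollama is running" text. This is a minimal sketch using only the standard library; the function name and timeout are illustrative choices.

```python
import urllib.request


def server_is_running(base_url: str = "http://localhost:11434", timeout: float = 2.0) -> bool:
    """True if the root URL answers with the 'Ollama is running' banner."""
    try:
        with urllib.request.urlopen(base_url, timeout=timeout) as resp:
            body = resp.read().decode("utf-8", errors="replace")
    except OSError:
        # Connection refused, timeout, DNS failure, or HTTP error status.
        return False
    return "Ollama is running" in body


print("Ollama server reachable:", server_is_running())
```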

Which AI model should you download first with Ollama?

Start with Llama 3.2:8B using ollama pull llama3.2:8b - it provides excellent performance while requiring only 4.7GB storage and 8GB RAM.

Choosing the Right Model

Ollama supports 100+ models through its registry at ollama.com/models. For beginners, these models offer the best balance:

Llama 3.2:8B - Best general-purpose model (4.7GB)

Llama 3.1:8B - Strong reasoning capabilities (4.7GB)

Code Llama 7B - Optimized for programming tasks (3.8GB)

Mistral 7B - Fast and efficient alternative (4.1GB)

Larger models like Llama 3.1:70B provide better quality but require 40GB+ storage and 64GB+ RAM. Start small and upgrade based on your needs.

Model Download Process

Download your chosen model with a single command:

bash

ollama pull llama3.2:8b

The download takes roughly 6-13 minutes on a typical 50-100 Mbps home connection (4.7GB is about 37,600 megabits). Ollama shows progress with a visual indicator:

pulling manifest

pulling 8daa9615cce9... 100% ▕██████████████▏ 4.7 GB

pulling 038aa9a13922... 100% ▕██████████████▏ 154 B

Models download in chunks for reliability. If your connection drops, Ollama resumes where it left off.
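Download time follows directly from model size and connection speed. The sketch below shows the arithmetic, assuming the connection sustains its rated speed and using decimal units (1 GB = 8,000 megabits).

```python
def download_minutes(size_gb: float, mbps: float) -> float:
    """Minutes to download size_gb at a sustained rate of mbps megabits/second."""
    megabits = size_gb * 8 * 1000  # GB -> megabits (decimal units)
    return megabits / mbps / 60


for speed in (50, 100, 150):
    print(f"4.7 GB at {speed} Mbps: {download_minutes(4.7, speed):.1f} min")
```

For the 4.7GB model this works out to about 12.5 minutes at 50 Mbps, 6.3 minutes at 100 Mbps, and 4.2 minutes at 150 Mbps.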

Starting Your First Chat

Launch your AI model with:

bash

ollama run llama3.2:8b

You'll see a prompt where you can start chatting immediately:

Hello! How can I help you today?

Hello! I'm an AI assistant created by Meta. I'm here to help answer questions,

provide information, assist with tasks, or just have a conversation.

What would you like to know or discuss?

Type your questions naturally. Responses begin without any network round trip since everything runs locally. Press Ctrl+D (or type /bye) to exit the chat.
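The same conversation can be driven from a script through Ollama's REST API on port 11434. The following is a sketch against the /api/chat endpoint with streaming disabled; the model tag is copied from this guide, so substitute whichever tag ollama list shows on your machine.

```python
import json
import urllib.request


def build_chat_request(prompt: str, model: str = "llama3.2:8b",
                       base_url: str = "http://localhost:11434") -> urllib.request.Request:
    """Build a non-streaming POST request for Ollama's /api/chat endpoint."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,  # ask for one JSON object instead of a stream
    }
    return urllib.request.Request(
        f"{base_url}/api/chat",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(prompt: str, **kwargs) -> str:
    """Send the prompt and return the assistant's reply text."""
    with urllib.request.urlopen(build_chat_request(prompt, **kwargs), timeout=120) as resp:
        return json.load(resp)["message"]["content"]


if __name__ == "__main__":
    try:
        print(chat("Hello!"))
    except OSError as exc:  # server not running, or model not available
        print(f"Could not reach Ollama: {exc}")
```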

How can you optimize Ollama for better performance?

Enable GPU acceleration through proper drivers, create custom Modelfiles for specialized personas, and use the OpenAI API compatibility on port 11434 for seamless integration with existing tools.

GPU Acceleration Setup

NVIDIA GPU acceleration requires proper CUDA drivers. Download the latest drivers from nvidia.com/drivers. Ollama automatically detects and uses available GPUs when properly configured.

Verify GPU usage with:

bash

nvidia-smi

During AI inference, you should see GPU utilization spike to 80-100%. If the GPU shows 0% usage, check your driver installation.
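For repeated checks, nvidia-smi can emit machine-readable CSV through its --query-gpu and --format options. The sketch below parses that output; the dictionary keys are names chosen for this example, and it assumes every field is numeric (some GPUs report "[N/A]" for certain fields, which this sketch does not handle).

```python
import shutil
import subprocess

QUERY = [
    "nvidia-smi",
    "--query-gpu=utilization.gpu,memory.used,memory.total",
    "--format=csv,noheader,nounits",
]


def parse_gpu_csv(text: str) -> list:
    """Parse one 'util, used, total' CSV line per GPU into dictionaries."""
    gpus = []
    for line in text.strip().splitlines():
        util, used, total = (float(field) for field in line.split(","))
        gpus.append({"util_pct": util, "mem_used_mib": used, "mem_total_mib": total})
    return gpus


def read_gpus() -> list:
    """Query nvidia-smi; return an empty list if it is missing or fails."""
    if shutil.which("nvidia-smi") is None:
        return []
    result = subprocess.run(QUERY, capture_output=True, text=True, timeout=10)
    return parse_gpu_csv(result.stdout) if result.returncode == 0 else []


print(read_gpus() or "No NVIDIA GPU detected (nvidia-smi unavailable or failed)")
```

Run it in a second terminal while a model is generating text to see whether utilization rises.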

For optimal performance:

Close unnecessary applications to free VRAM

Monitor temperatures during extended use

Update drivers regularly for best compatibility

Custom Modelfiles

Modelfiles let you create specialized AI assistants with custom personalities and instructions. Create a file called Modelfile:

FROM llama3.2:8b

PARAMETER temperature 0.7

SYSTEM """

You are a helpful coding assistant specialized in Python.

Always provide clean, well-commented code examples.

Explain complex concepts in simple terms.

"""

Build your custom model:

bash

ollama create python-tutor -f ./Modelfile

ollama run python-tutor

This creates a Python-focused assistant that maintains consistent behavior across conversations.
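If you maintain several personas, generating Modelfiles from a template keeps them consistent. This is a small sketch that renders the same three directives used above (FROM, PARAMETER temperature, SYSTEM); the function name is invented for illustration.

```python
def render_modelfile(base_model: str, system_prompt: str, temperature: float = 0.7) -> str:
    """Render Modelfile text with FROM, PARAMETER temperature, and SYSTEM."""
    return (
        f"FROM {base_model}\n"
        f"PARAMETER temperature {temperature}\n"
        'SYSTEM """\n'
        f"{system_prompt.strip()}\n"
        '"""\n'
    )


text = render_modelfile(
    "llama3.2:8b",
    "You are a helpful coding assistant specialized in Python.\n"
    "Always provide clean, well-commented code examples.",
)
print(text)
# Save the text to a file named Modelfile, then build it with:
#   ollama create python-tutor -f ./Modelfile
```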

OpenAI API Compatibility

Ollama provides OpenAI-compatible API endpoints under http://127.0.0.1:11434/v1. This lets you use Ollama with applications designed for OpenAI's API by changing only the base URL and API key, with no other code changes.

Start the server:

bash

ollama serve

Use the API endpoint in your applications:

python

import openai

client = openai.OpenAI(

base_url='http://localhost:11434/v1',

api_key='ollama', # required but unused

)

response = client.chat.completions.create(

model='llama3.2:8b',

messages=[{'role': 'user', 'content': 'Hello!'}]

)

print(response.choices[0].message.content)

This compatibility makes Ollama a drop-in replacement for cloud services in many applications.
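One way to picture the drop-in idea is a helper that returns client settings for either backend, so the rest of the application stays the same. This is a sketch under assumptions: the helper name is hypothetical, and the cloud key is read from an OPENAI_API_KEY environment variable.

```python
import os


def client_settings(use_local: bool) -> dict:
    """Return base_url/api_key keyword arguments for an OpenAI-style client."""
    if use_local:
        # Ollama ignores the key, but OpenAI-style clients require one.
        return {"base_url": "http://localhost:11434/v1", "api_key": "ollama"}
    return {
        "base_url": "https://api.openai.com/v1",
        "api_key": os.environ.get("OPENAI_API_KEY", ""),
    }


settings = client_settings(use_local=True)
print("Using endpoint:", settings["base_url"])
# With the openai package installed, the client would be created as:
#   client = openai.OpenAI(**settings)
```

Switching use_local lets you keep private work on the local model and send other requests to a cloud provider.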

How does Ollama compare to other local AI solutions?

Ollama offers the fastest setup time (5-15 minutes), CLI-focused workflow, and broadest model support with 100+ models, while alternatives like Jan.AI provide GUI interfaces and LM Studio offers advanced quantization features.

| Feature | Ollama | Jan.AI | LM Studio | Cloud APIs |
|---|---|---|---|---|
| Setup Time | 5-15 minutes | 10-20 minutes | 15-30 minutes | Instant |
| Interface | CLI + API | GUI primary | GUI primary | Web/API |
| Model Support | 100+ models | 50+ models | 80+ models | Limited |
| Cost | Free | Free | Free | $20-500/month |
| Privacy | Complete | Complete | Complete | Limited |
| GPU Support | Excellent | Good | Excellent | N/A |
| API Compatible | OpenAI format | Custom | Custom | Native |

Ollama vs Jan.AI

Jan.AI provides a user-friendly graphical interface for users who prefer visual model management. It offers similar functionality to Ollama but with a steeper learning curve for automation and scripting.

Choose Ollama if you're comfortable with command-line tools, want fastest setup, or need API compatibility.

Choose Jan.AI if you prefer visual interfaces, want built-in chat history, or are new to local AI.

Ollama vs LM Studio

LM Studio focuses on model quantization and fine-tuning capabilities. It provides more granular control over model parameters but requires more technical knowledge.

Choose Ollama if you want simple model deployment, need API compatibility, or prioritize ease of use.

Choose LM Studio if you need custom quantization, want to experiment with model variants, or require advanced tuning options.

Ollama vs Cloud APIs

Cloud services like OpenAI offer unlimited scale and cutting-edge models but sacrifice privacy and require ongoing payments. The choice depends on your specific needs:

Local AI (Ollama) wins for complete privacy and data control, zero ongoing costs after setup, offline functionality, and consistent performance.

Cloud APIs win for latest model access (GPT-4, Claude 3.5), unlimited computational resources, no hardware requirements, and global accessibility.

For most users, a hybrid approach works best: use Ollama for private/sensitive work and cloud services for tasks requiring cutting-edge capabilities.

Can you integrate Ollama with other AI applications?

Yes, Ollama's OpenAI API compatibility and server mode on port 11434 enable seamless integration with tools like OpenClaw for AI agents, Jan.AI for GUI management, and various VS Code extensions.

OpenClaw for AI Agents

OpenClaw builds sophisticated AI agent workflows on top of Ollama's foundation. Its setup wizard automatically detects your Ollama installation and configures agent templates for common tasks.

The integration enables multi-step reasoning, file manipulation, and complex workflows while maintaining complete local privacy. This combination rivals cloud-based agent platforms without the privacy concerns.

Jan.AI for GUI Interface

Jan.AI can connect to your existing Ollama installation, providing a visual interface for model management and conversations. This gives you the best of both worlds: Ollama's performance with Jan.AI's user-friendly design.

The integration maintains separate model libraries, so you can use both tools simultaneously without conflicts.

VS Code Extensions

Several VS Code extensions support Ollama for coding assistance:

Continue - Provides inline code completion and chat

CodeGPT - Integrates multiple AI providers including Ollama

Ollama Autocomplete - Dedicated Ollama extension for code suggestions

Configure these extensions to use http://localhost:11434 as the API endpoint. This gives you AI-powered coding assistance without sending your code to external services.

What should you do if Ollama installation fails?

Run the installer as administrator, ensure Ollama is added to your system PATH, bypass Windows SmartScreen warnings if needed, and verify GPU drivers for acceleration.

Installation Problems

"Command not found" errors: Restart your terminal or command prompt after installation. Windows users need to log out and back in to refresh environment variables.

Permission denied on Linux/macOS: Ensure you have proper permissions for the installation directory. The install script needs sudo access:

bash

curl -fsSL https://ollama.com/install.sh | sudo sh

Windows SmartScreen blocking: Click "More info" then "Run anyway" when Windows warns about the installer. Ollama is safe but relatively new, triggering security warnings.

Model Download Errors

Network timeouts: Slow internet can cause download failures. Ollama automatically resumes interrupted downloads, so simply retry the ollama pull command.

Insufficient disk space: Models require significant storage. Check available space with df -h (Linux/macOS) or through File Explorer (Windows).

Corrupted downloads: Delete partial downloads with ollama rm <model> then retry the pull command.

Performance Issues

Slow inference speed: Ensure GPU drivers are current and properly installed. Monitor GPU usage during inference to verify acceleration is working.

High memory usage: Close unnecessary applications before running large models. Consider using smaller model variants if your system struggles.

System freezing: Large models can overwhelm systems with insufficient RAM. Start with smaller models and gradually test larger ones.

How can you optimize your local AI setup long-term?

Regularly update models with ollama pull, monitor system resources during inference, organize models with ollama list, and understand the privacy implications of local versus cloud processing.

Model Management

Keep your models organized and updated:

bash

ollama list

ollama pull llama3.2:8b

ollama rm old-model:tag

ollama show llama3.2:8b

Regular updates ensure you have the latest model versions with improved performance and capabilities. Set a monthly reminder to update your most-used models.
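The model inventory is also available programmatically from the /api/tags endpoint of the local server, which is useful for spotting the largest models when disk space runs low. This is a sketch; the helper names are illustrative, and the summary relies only on the name and size fields of each entry.

```python
import json
import urllib.request


def summarize_models(tags_response: dict) -> list:
    """Return (name, size in GB) pairs, largest first, from an /api/tags reply."""
    rows = [
        (model["name"], round(model["size"] / 1e9, 1))
        for model in tags_response.get("models", [])
    ]
    return sorted(rows, key=lambda row: row[1], reverse=True)


def fetch_tags(base_url: str = "http://localhost:11434") -> dict:
    """Fetch the installed-model list from a running Ollama server."""
    with urllib.request.urlopen(f"{base_url}/api/tags", timeout=5) as resp:
        return json.load(resp)


if __name__ == "__main__":
    try:
        for name, size_gb in summarize_models(fetch_tags()):
            print(f"{name}: {size_gb} GB")
    except OSError as exc:
        print(f"Could not reach Ollama: {exc}")
```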

Performance Optimization

Monitor system resources during AI inference to identify bottlenecks:

CPU usage should stay below 80% for responsive multitasking

RAM usage shouldn't exceed 90% of available memory

GPU temperature should remain under 83°C for sustained use

Storage I/O should use SSDs for optimal model loading speeds
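The first three thresholds above can be turned into a simple check. The sketch below takes readings you have already collected (for example from Task Manager, top, or nvidia-smi) and returns warnings; how the readings are gathered is left out on purpose.

```python
def resource_warnings(cpu_pct: float, ram_pct: float, gpu_temp_c: float) -> list:
    """Compare readings against the thresholds listed in this guide."""
    warnings = []
    if cpu_pct >= 80:
        warnings.append(f"CPU at {cpu_pct:.0f}% (target: below 80%)")
    if ram_pct > 90:
        warnings.append(f"RAM at {ram_pct:.0f}% (target: at most 90%)")
    if gpu_temp_c >= 83:
        warnings.append(f"GPU at {gpu_temp_c:.0f}°C (target: under 83°C)")
    return warnings


# Example readings, chosen for illustration only.
for message in resource_warnings(cpu_pct=85, ram_pct=70, gpu_temp_c=84):
    print("WARNING:", message)
```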

Security Considerations

Local AI provides complete privacy but requires proper system security. Keep your operating system updated, use strong passwords, and enable disk encryption for sensitive work.

Regular backups protect your custom models and configurations. Store important Modelfiles in version control systems for easy recovery and sharing across devices.

Frequently Asked Questions

How much storage space do I need for Ollama models?

Plan for 50-100GB storage space. Llama 3.2:8B requires 4.7GB, Llama 3.1:70B needs 40GB, and Code Llama 34B uses 19GB. SSDs provide faster model loading compared to traditional hard drives.

Can I run multiple models simultaneously?

Yes, but each model consumes RAM and VRAM independently. Running Llama 3.2:8B (8GB RAM) and Code Llama 7B (6GB RAM) simultaneously requires 14GB+ available memory for smooth operation.

Does Ollama work without internet?

Yes, after initial model downloads. Ollama runs completely offline for inference. You only need internet connectivity to download new models or updates from the Ollama registry.

How does local AI performance compare to cloud services?

Modern GPUs like the RTX 4060 deliver 50+ tokens per second with Llama 3.2:8B, matching or exceeding cloud service speeds while eliminating network latency. Local responses start as soon as the model is loaded, versus 2-5 seconds for cloud APIs.

Can I use Ollama for commercial projects?

Yes, Ollama itself is open-source. Check individual model licenses - most models like Llama 3.2 allow commercial use. Local deployment eliminates API costs and data privacy concerns for business applications.

Related Resources

Explore more AI tools and guides

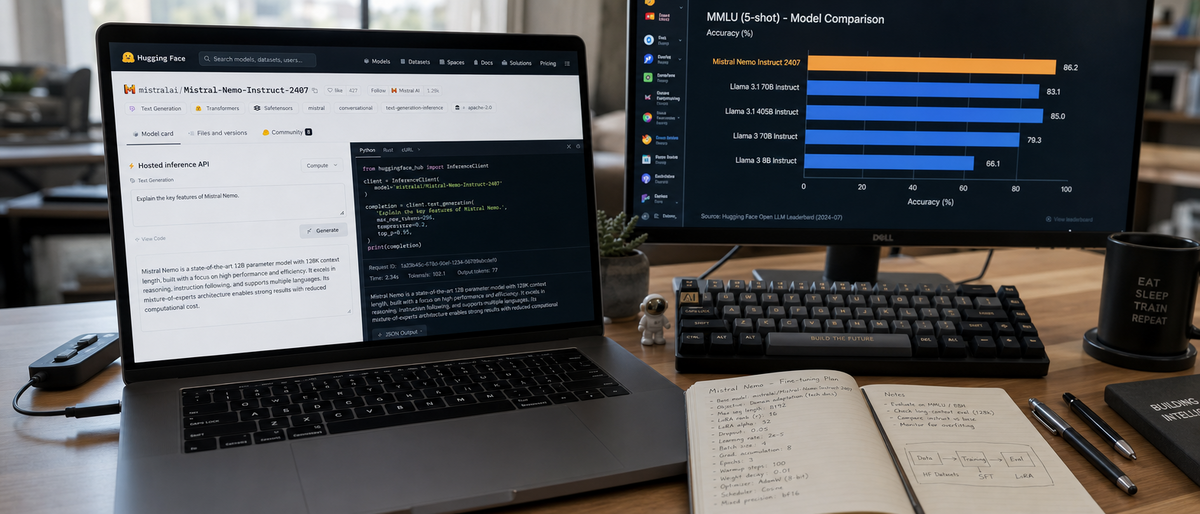

Mistral AI Review 2026: Ultimate Hands-On Analysis of Open-Source Model Performance, Fine-Tuning, and Deployment Options

Best Local AI for Mac 2026: Ultimate Hands-On Review After Claude Code Removal – Top Offline LLMs for Privacy and Performance

Ultimate Local LLM Comparison 2026: Ollama vs Gemma 4 on Smartphones – Mobile Benchmarks, Battery Life & Offline Setup

Best No-Code AI Agent Builders 2026: Ultimate Hands-On Review of Top Platforms for Effortless Autonomous Agents and Workflow Automation

Best AI Code Review Tools 2026: Ultimate Hands-On Review of Top Platforms for Automated Code Analysis, Bug Detection, and Developer Collaboration

More open source ai articles

About the Author

Rai Ansar

Founder of AIToolRanked • AI Researcher • 200+ Tools Tested

I've been obsessed with AI since ChatGPT launched in November 2022. What started as curiosity turned into a mission: testing every AI tool to find what actually works. I spend $5,000+ monthly on AI subscriptions so you don't have to. Every review comes from hands-on experience, not marketing claims.