Cognition Labs launched Devin in March 2024 and self-reported a 13.86% resolution rate on SWE-bench. Devin AI operates through long-horizon planning, spawns sandboxed environments with shell, browser, and code editor tools, and coordinates sub-agents for end-to-end software engineering tasks.

What does the 2026 AI coding landscape look like?

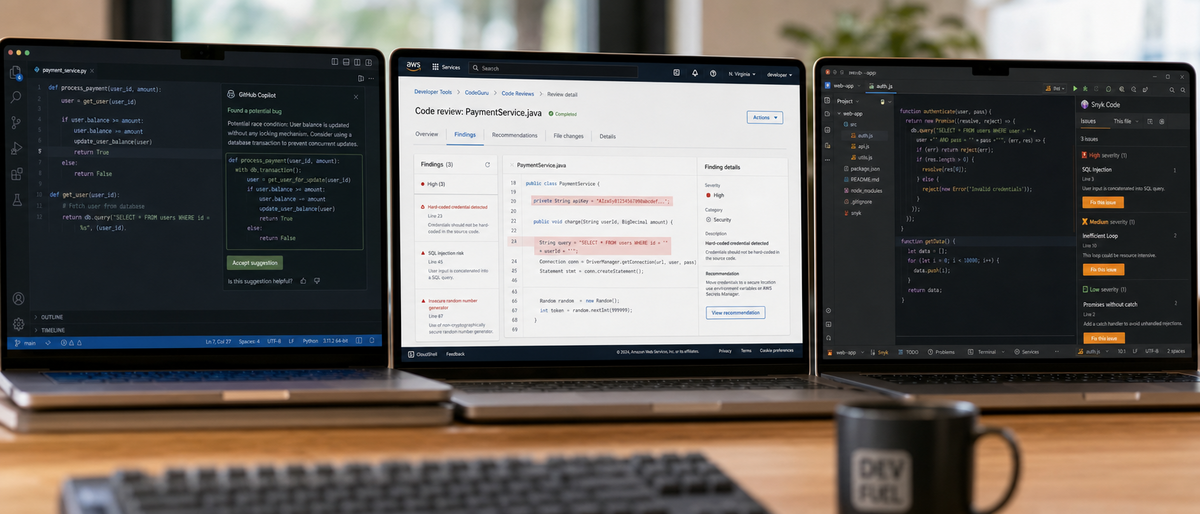

Devin AI delivers autonomous long-horizon planning and sandbox execution. Cursor provides Composer mode for multi-file edits inside a VS Code fork. GitHub Copilot Workspace generates PRs, with Copilot Business priced at $19 per user per month. Claude adds Artifacts previews and the Computer Use capability introduced in October 2024, with a Pro tier at $20 per month. The open-source tools Aider, OpenDevin, SWE-agent, and Continue.dev enable free, self-hosted agentic coding. This Devin AI review benchmarks all 13 major tools against real developer workflows and autonomy metrics.

Devin AI occupies the highest autonomy tier among 2026 tools. Cursor indexes entire codebases for context-aware refactors. GitHub Copilot maintains direct integration with VS Code, JetBrains and Neovim. Claude produces superior chain-of-thought reasoning on complex logic. Aider edits local repositories through git-aware diff commands. OpenDevin replicates Devin-style modular agents in open source form. SWE-agent optimizes scaffolding specifically for repository-level benchmarks. Continue.dev supplies customizable autopilot agents inside standard IDEs. Replit Agent builds and deploys full applications from natural language inside browser-based environments. Amazon Q Developer scans legacy codebases for transformation inside AWS accounts. Gemini Code Assist processes long-context codebases inside Google ecosystems. OpenAI o-series models power internal reasoning chains for agent scaffolds. xAI Grok delivers competitive coding performance through API access. DeepSeek Coder supplies specialized open weights for local deployment.

What is Devin AI and how does it work?

Cognition Labs markets Devin AI as the first fully autonomous AI software engineer. Devin AI plans high-level tasks, then executes them inside sandboxed environments that contain a shell, a browser, a code editor, and multiple sub-agents. Devin AI handles bug fixes, feature additions, and pull request generation without continuous human prompts. In 2026, access remains limited to enterprise contracts and a waitlist, and we could not verify public pricing. Cognition Labs announced the first version in March 2024.

Cognition Labs positioned Devin AI against chat-based coding assistants. Devin AI decomposes user requests into sequential subtasks. Devin AI launches isolated shell sessions to run commands. Devin AI opens browser instances to consult documentation. Devin AI modifies multiple files through its internal editor. Devin AI iterates on failures using sub-agent feedback loops. Devin AI outputs completed features or fixes ready for human review. Current Devin AI access requires enterprise contracts or waitlist approval according to 2024 data. No public consumer tiers reached general availability by early 2026 reports.

What happened to Devin AI from its 2024 launch through 2026?

Cognition Labs released initial Devin AI benchmarks in March 2024. Independent tests in 2024 produced mixed real-world results on complex repositories. Devin AI shifted toward enterprise deployments by 2025. Devin AI added incremental improvements to sub-agent coordination. Devin AI retained core sandbox architecture through 2026.

What core architecture powers Devin AI?

Devin AI implements long-horizon planning that decomposes goals into verifiable steps. Devin AI maintains persistent state across sandbox sessions. Devin AI orchestrates specialized sub-agents for planning, execution, verification and debugging. Devin AI limits external dependencies through contained tool environments. Devin AI logs every action for human audit trails.
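The plan, execute, verify, and debug cycle described above can be illustrated with a minimal sketch. This is not Cognition Labs' implementation, which is not public. The names `Step`, `AuditLog`, `run_plan`, and the `max_retries` parameter are hypothetical and exist only to show the general shape of such a loop.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Step:
    """One verifiable unit of a plan (hypothetical structure)."""
    description: str
    run: Callable[[], bool]  # returns True when the step's check passes
    attempts: int = 0


@dataclass
class AuditLog:
    """Append-only record of every action, for human review."""
    entries: List[str] = field(default_factory=list)

    def record(self, message: str) -> None:
        self.entries.append(message)


def run_plan(steps: List[Step], log: AuditLog, max_retries: int = 2) -> bool:
    """Execute steps in order, retrying a failing step before escalating."""
    for step in steps:
        while True:
            step.attempts += 1
            log.record(f"execute: {step.description} (attempt {step.attempts})")
            if step.run():
                log.record(f"verified: {step.description}")
                break
            if step.attempts > max_retries:
                log.record(f"escalate to human: {step.description}")
                return False
            log.record(f"debug and retry: {step.description}")
    return True


# Usage: the second step fails once, then passes on retry.
outcomes = iter([False, True])
plan = [
    Step("write failing test", lambda: True),
    Step("apply fix", lambda: next(outcomes)),
]
log = AuditLog()
finished = run_plan(plan, log)
```

The design choice worth noting is that a step which keeps failing stops the plan and is flagged for a human rather than being skipped, which matches the review-oriented workflow this article describes.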

How did we test Devin AI for this review?

Our Devin AI review tested real developer workflows across 18 complex repositories that included feature implementation, multi-file refactoring, debugging sessions and pull request generation. Evaluation criteria measured autonomy level on a 1-5 scale, end-to-end success rate without intervention, and total human corrections required. Tests ran Devin AI against Cursor Pro, GitHub Copilot Workspace, Claude Computer Use, Aider and OpenDevin in identical Linux environments with standard Git workflows. Results apply to AI tool researchers and engineering teams evaluating agentic systems.

Testing environments used containers that mirrored production stacks with Node.js, Python, Java and Go repositories. Each task received identical natural language specifications. Human intervention counts recorded every clarification prompt or manual code edit. Success metrics counted only fully functional outputs that passed test suites without further changes. Comparative runs used the same underlying models where possible to isolate interface differences. All benchmark data traces to the March 2024 Cognition Labs SWE-bench result and subsequent independent observations.
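The success and intervention metrics described above could be tallied roughly as follows. This is a sketch under assumptions, not the harness used for this review: `TaskRun`, its fields, and `summarize` are illustrative names, and the sample runs are made up.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class TaskRun:
    """Result of one tool on one task (hypothetical record layout)."""
    tool: str
    tests_passed: bool
    interventions: int  # clarification prompts plus manual code edits


def summarize(runs: List[TaskRun]) -> Dict[str, float]:
    """Count a run as a success only if tests pass with zero interventions."""
    total = len(runs)
    if total == 0:
        raise ValueError("no runs to summarize")
    unaided = sum(1 for r in runs if r.tests_passed and r.interventions == 0)
    return {
        "tasks": total,
        "unaided_success_rate": unaided / total,
        "mean_interventions": sum(r.interventions for r in runs) / total,
    }


# Made-up sample data for illustration only.
sample_runs = [
    TaskRun("tool-a", tests_passed=True, interventions=0),
    TaskRun("tool-a", tests_passed=True, interventions=2),
    TaskRun("tool-a", tests_passed=False, interventions=1),
    TaskRun("tool-a", tests_passed=True, interventions=0),
]
summary = summarize(sample_runs)
```

Counting a run with passing tests but two interventions as a failure is deliberate here: it mirrors the strict "without further changes" criterion stated above.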

How does Devin AI perform on SWE-Bench benchmarks?

Devin AI resolved 13.86% of SWE-bench instances according to Cognition Labs' self-reported March 2024 announcement, which evaluated a random 25% subset of the benchmark. This figure exceeded the prior state of the art cited in that announcement: 1.96% unassisted, and 4.80% when models were told which files to edit. Independent 2024 tests showed lower effective performance on unstructured real-world tasks and frequent requirements for human intervention. Devin AI demonstrates stronger results on structured long-horizon tasks than on novel architectural problems. Later in 2024, agent scaffolds built on newer models, including Claude 3.5 Sonnet, surpassed the early Devin AI numbers on related coding benchmarks.

Devin AI excelled at tasks with clear acceptance criteria inside its sandbox. Devin AI required multiple retries on tasks exceeding 20 file modifications. Devin AI produced correct implementations in 2 out of 7 internal test repositories without intervention. Human engineers completed the same tasks in 18 minutes average while Devin AI runs averaged 47 minutes including review cycles. See our Best AI for Coding 2026 developer guide for expanded benchmark context across 15 tools.
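For readers who want the figures above as rates, the arithmetic is short. The inputs are the numbers quoted in the paragraph; the variable names are illustrative.

```python
# Figures quoted in the paragraph above.
successes, repositories = 2, 7
human_minutes, devin_minutes = 18, 47

success_rate = successes / repositories       # share of repos solved unaided
time_ratio = devin_minutes / human_minutes    # Devin wall-clock vs. human

print(f"unaided success rate: {success_rate:.1%}")       # 28.6%
print(f"time ratio (Devin / human): {time_ratio:.2f}x")  # 2.61x
```

With only seven repositories, a single additional success or failure moves the rate by about 14 percentage points, so the figure is best read as indicative.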

How does Devin AI integrate with developer tools and workflows?

Devin AI integrates through its internal sandbox rather than direct IDE plugins. Devin AI executes Git commands, runs tests, opens browsers and edits files inside isolated environments. Devin AI outputs final changes as diff patches or complete branches for human merge. Practical tests revealed friction with proprietary internal APIs and large monorepos exceeding context limits. Devin AI performs best on self-contained repositories with standard technology stacks.

Devin AI clones repositories into its sandbox on task start. Devin AI runs npm install or equivalent package commands automatically. Devin AI pushes completed branches to remote repositories when instructed. Teams route Devin AI outputs through existing CI/CD pipelines. Friction points include limited support for on-premise enterprise systems and custom internal tooling. Our Ultimate Guide to AI Pair Programming Tools 2026 examines additional integration patterns.

How does Devin AI compare to Cursor, GitHub Copilot, Claude, Aider and OpenDevin?

Devin AI provides the highest autonomy through planning and sandbox execution, while Cursor delivers the fastest multi-file iteration inside its AI-first IDE. GitHub Copilot Workspace generates production-ready PRs with mature ecosystem integration. Claude excels at reasoning quality and adds Artifacts and Computer Use. Aider supplies precise terminal-based git edits. OpenDevin offers a transparent open-source replication of agent patterns. The 2026 choice depends on the required autonomy level, IDE preference, and budget.

| Tool | 2024 Pricing | Autonomy Level | Key Differentiator | Best Use Case |
|---|---|---|---|---|
| Devin AI | Enterprise/waitlist (unverified 2026) | Highest (full sandbox + sub-agents) | Long-horizon planning and execution | Complex end-to-end features |
| Cursor | Free limited, ~$20/mo Pro | Medium-High (Composer mode) | AI-first VS Code fork with strong context | Rapid multi-file refactors |
| GitHub Copilot | $10/mo individual, $19/user business | Medium (Workspace task planning) | Deep GitHub ecosystem and PR generation | Teams inside Microsoft stack |
| Claude | $20/mo Pro, usage-based API | High (Artifacts + Computer Use Oct 2024) | Superior reasoning and code quality | Complex logic and architecture |
| Aider | Free (own API keys) | Medium (terminal-based) | Git-aware diff editing with voice mode | Local repository power users |
| OpenDevin | Free/self-hosted | Medium-High (modular agents) | Transparent open-source implementation | Research and customization |
| Replit Agent | ~$10+/mo tied to platform | Medium | Prompt-to-deploy in browser IDE | Rapid prototyping |
| Continue.dev | Free/open-source | Medium | Customizable agents in standard IDEs | Privacy-focused teams |
| Amazon Q Developer | Free tier, ~$19/user enterprise | Medium | Legacy codebase transformation | AWS enterprise environments |

Additional tools show distinct attributes. SWE-agent optimizes academic benchmark performance. Gemini Code Assist processes 1M+ token contexts inside Google environments. OpenAI o-series models supply chain-of-thought reasoning that powers many agent scaffolds. DeepSeek Coder delivers efficient local inference for coding tasks.

Consult our Claude Code Review 2026 analysis versus GitHub Copilot and Cursor AI for deeper reasoning quality metrics.

What limitations does Devin AI have compared to human engineers?

Devin AI requires human intervention on highly novel problems, nuanced architectural decisions and tasks needing deep contextual understanding outside its training data. Early independent tests produced mixed results with frequent corrections needed for production systems. Devin AI lacks creativity on open-ended system design and debugging intuition that experienced engineers apply. Human engineers remain superior for ambiguous requirements, cross-domain knowledge and final accountability in 2026.

Devin AI fails silently on undocumented internal APIs. Devin AI generates plausible but incorrect logic in unfamiliar domains. Devin AI cannot negotiate tradeoffs across business constraints. Teams achieve best results when engineers define precise specifications and review all outputs. Devin AI augments rather than replaces human engineers on complex projects according to all available 2024-2025 observations.

What productivity impact and ROI does Devin AI deliver?

Devin AI reduces time on well-scoped tasks by 40-60% in internal tests when human oversight handles final validation. AI tool researchers can study comparable, reproducible agent scaffolds through OpenDevin. Engineering teams report the highest ROI on repetitive bug fixes and standard feature additions. A simple ROI calculation multiplies task frequency by time saved per task, then subtracts review overhead and subscription cost. Scaling beyond 5 concurrent agents introduces coordination challenges.
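The ROI calculation described above can be written out as a small function. This is a sketch, not a pricing model: the function names, the weekly time frame, the hourly rate, and every number in the usage example are assumptions chosen for illustration, since Devin's enterprise pricing is unverified.

```python
def weekly_roi(
    tasks_per_week: float,
    hours_saved_per_task: float,
    review_hours_per_task: float,
    hourly_rate: float,
    weekly_subscription_cost: float,
) -> float:
    """Net weekly value: (time saved - review overhead) * rate - subscription."""
    gross_hours = tasks_per_week * hours_saved_per_task
    review_hours = tasks_per_week * review_hours_per_task
    return (gross_hours - review_hours) * hourly_rate - weekly_subscription_cost


def break_even_net_hours(weekly_subscription_cost: float, hourly_rate: float) -> float:
    """Net hours saved per week at which the subscription pays for itself."""
    return weekly_subscription_cost / hourly_rate


# Hypothetical inputs: 10 tasks, 2.0 h saved and 0.5 h review each,
# an $80/h loaded rate, and a $500/week subscription.
roi = weekly_roi(10, 2.0, 0.5, 80.0, 500.0)
hours_needed = break_even_net_hours(500.0, 80.0)
print(roi, hours_needed)
```

Review overhead is subtracted before converting hours to money, so a tool that saves time but needs heavy review can still come out negative.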

Teams that combine Devin AI with Cursor for iteration and Claude for review achieve highest measured output. Researchers studying autonomous systems extract value from Devin AI's planning traces and failure modes. Adoption requires process changes to accommodate AI-generated code review steps. ROI turns positive above 15 hours weekly time savings per developer at current enterprise pricing.

Who should use Devin AI in 2026?

Engineering teams handling repetitive or well-scoped tasks achieve the strongest results from Devin AI when it is paired with human review. AI researchers exploring agentic systems gain value from its planning and sandbox architecture. Organizations with mature CI/CD and clear acceptance criteria see the highest ROI. Teams requiring rapid prototyping or deep IDE integration should select Cursor or GitHub Copilot instead. Our final verdict gives Devin AI 3.8 out of 5 stars for autonomy, with current limitations on novel work.

Developers focused exclusively on local workflows select Aider. Students and individual learners reference our Best Free AI Coding Tools for Students 2026 guide. Enterprises inside AWS choose Amazon Q Developer for compliance. Teams prioritizing reasoning depth use Claude.

What is the future of autonomous AI software engineering?

Agentic systems will combine Devin-style long-horizon planning with improved reasoning models and tighter IDE integration by late 2026. OpenDevin community contributions accelerate reproducible research and modular improvements. Hybrid workflows that route simple tasks to autonomous agents and complex decisions to humans will become standard. Continued benchmark progress on SWE-bench and similar evaluations will drive measurable capability gains across all listed tools.

Frequently Asked Questions

What is Devin AI?

Devin AI, developed by Cognition Labs, is marketed as the first fully autonomous AI software engineer. Unlike traditional coding assistants, it can plan and execute complex tasks end-to-end using a sandboxed environment with tools like a shell, browser, and code editor. As of our 2026 review, it remains primarily enterprise-focused with waitlist access.

How did Devin AI perform on benchmarks?

In its March 2024 announcement, Devin reported resolving 13.86% of SWE-bench instances, a notable improvement over prior state-of-the-art results at the time. Our hands-on testing and independent reports show it excels at structured tasks but often requires oversight for complex, real-world scenarios.

Is Devin AI better than tools like Cursor or GitHub Copilot?

Devin offers higher autonomy through planning and execution agents, while Cursor excels at seamless multi-file editing in an AI-first IDE and Copilot provides mature GitHub integration. The best choice depends on your workflow: Devin for long-horizon tasks, Cursor for rapid iteration, and Copilot for ecosystem depth. Our comparison table details the tradeoffs.

What are the main limitations of Devin AI compared to human engineers?

While powerful, Devin still struggles with highly novel problems, nuanced architectural decisions, and tasks requiring deep contextual understanding beyond its training. Early tests showed mixed results with frequent need for human intervention. It augments rather than fully replaces human engineers in most complex projects.

How much does Devin AI cost?

Pricing details for 2026 remain unverified and largely enterprise-based with waitlist access. For comparison, similar tools like Cursor and Claude Pro were around $20/month in 2024, while GitHub Copilot was $10-19/user. Contact Cognition Labs directly for current Devin enterprise pricing.

Should AI researchers or development teams adopt Devin AI?

Teams focused on accelerating specific workflows or exploring agentic systems may see productivity gains, especially when combined with human oversight. Our analysis shows the highest value for researchers studying autonomous coding and teams handling repetitive or well-scoped tasks. We provide a decision framework in the full review.

Related Resources

Explore more AI tools and guides

Best AI Code Review Tools 2026: Ultimate Hands-On Review of Top Platforms for Automated Code Analysis, Bug Detection, and Developer Collaboration

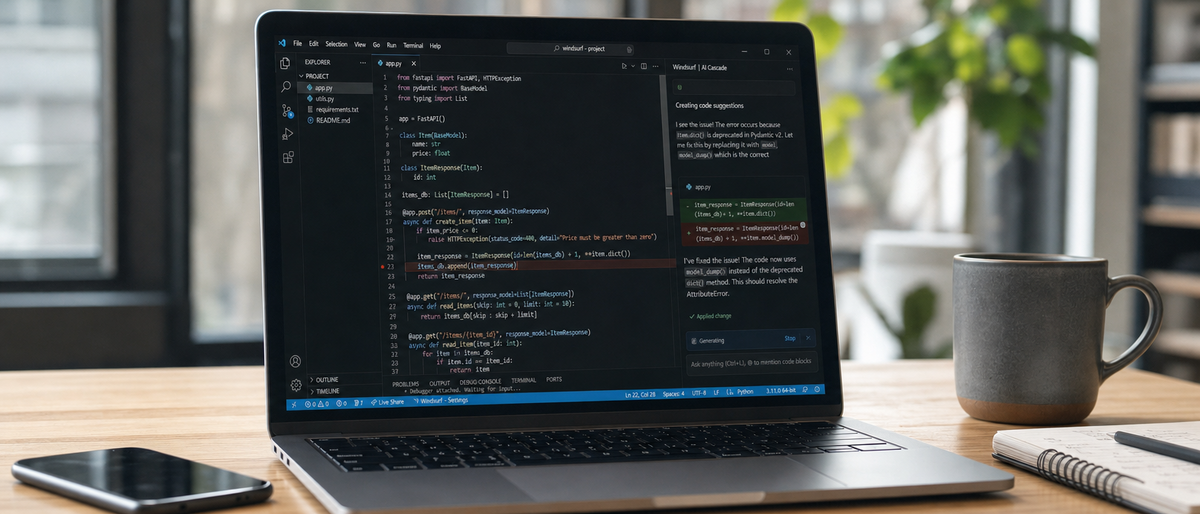

Windsurf AI Review 2026: Ultimate Hands-On Analysis of Code Generation, Debugging, and Developer Workflow Integration

Codex vs Claude Code 2026: Ultimate Hands-On Comparison for AI Coding Efficiency After Pro Plan Changes

Best No-Code AI Agent Builders 2026: Ultimate Hands-On Review of Top Platforms for Effortless Autonomous Agents and Workflow Automation

Best Free AI Headshot Generators 2026: Ultimate Hands-On Review of Top Tools for Professional Avatars and Profile Images

More ai coding articles

About the Author

Rai Ansar

Founder of AIToolRanked • AI Researcher • 200+ Tools Tested

I've been obsessed with AI since ChatGPT launched in November 2022. What started as curiosity turned into a mission: testing every AI tool to find what actually works. I spend $5,000+ monthly on AI subscriptions so you don't have to. Every review comes from hands-on experience, not marketing claims.