Elicit searches 125 million academic papers via natural language queries and extracts data into customizable tables for systematic literature reviews.

Why Has the Explosion in Academic Papers Made AI Literature Review Tools Essential?

Academic publishing grows exponentially, which makes manual literature reviews unsustainable for researchers who must screen thousands of studies. AI literature review tools run semantic search across 125 million papers in Elicit and 200 million papers in Consensus, while scite classifies over 1 billion citations. Our 16-person specialist team tested standardized workflows from question to synthesis using only pre-2025 data, and we explicitly note the lack of independent 2025-2026 third-party benchmarks.

Exponential paper growth leaves researchers with unsustainable workloads. Traditional manual literature reviews require teams to read hundreds of full texts per project, and they duplicate effort across labs and institutions.

Specialized AI literature review tools index massive academic databases and output structured data. General-purpose LLM tools work from documents you supply instead: Claude analyzes uploaded PDFs within a 200K-token context window, and NotebookLM creates timelines, FAQs, and audio overviews from document sets.
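To make the 200K-token figure concrete, here is a rough back-of-envelope sketch. The per-paper token counts and the 10K tokens reserved for instructions and the reply are hypothetical assumptions, not measurements; real papers vary widely with length, tables, and reference lists.

```python
# Rough estimate of how many full papers fit in a single context window.
# ASSUMPTION: the per-paper token counts below are hypothetical examples.

CONTEXT_WINDOW_TOKENS = 200_000  # Claude's context window size cited in this article


def papers_that_fit(tokens_per_paper: int, reserved_tokens: int = 0) -> int:
    """Return how many whole papers fit after reserving tokens for prompt and reply."""
    if tokens_per_paper <= 0:
        raise ValueError("tokens_per_paper must be positive")
    usable = CONTEXT_WINDOW_TOKENS - reserved_tokens
    # Floor division keeps only whole papers; clamp at zero if the reserve is too large.
    return max(usable // tokens_per_paper, 0)


# Hypothetical paper lengths, with 10K tokens held back for instructions and output.
for per_paper in (8_000, 12_000, 20_000):
    print(f"{per_paper:>6} tokens/paper -> {papers_that_fit(per_paper, reserved_tokens=10_000)} papers")
```

Under these assumptions a single window holds roughly a dozen to two dozen full papers, which is why long-context tools suit synthesis of a curated set rather than broad discovery.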

Elicit focuses on end-to-end systematic review workflows. Consensus provides evidence meters that visualize how strongly the literature agrees on yes/no/maybe questions. scite classifies each citation as supporting, contradicting, or mentioning and shows the surrounding context snippet.
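The sketch below shows one simple way a yes/no/maybe tally could be turned into the percentages an evidence meter displays. It is a hypothetical illustration, not Consensus's actual algorithm, and it deliberately ignores any weighting by study design or relevance that a real product might apply.

```python
from collections import Counter

CATEGORIES = ("yes", "maybe", "no")


def agreement_meter(labels: list[str]) -> dict[str, float]:
    """Share of papers (in percent) giving each answer; unweighted, hypothetical scheme."""
    counts = Counter(label.strip().lower() for label in labels)
    unexpected = set(counts) - set(CATEGORIES)
    if unexpected:
        raise ValueError(f"unexpected labels: {sorted(unexpected)}")
    total = sum(counts.values())
    if total == 0:
        return {c: 0.0 for c in CATEGORIES}
    # Counter returns 0 for categories that never appear.
    return {c: round(100 * counts[c] / total, 1) for c in CATEGORIES}


print(agreement_meter(["Yes", "yes", "maybe", "no", "yes"]))
# {'yes': 60.0, 'maybe': 20.0, 'no': 20.0}
```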

The team applied the same question, search, extraction, and synthesis sequence to every tool. It measured factual accuracy, hallucination rates, and completeness against source papers, and it tracked workflow completion times alongside user-reported patterns from pre-2025 sources.

Our Best AI Research Tools for Students 2026: Ultimate Perplexity vs Elicit vs Consensus Comparison examines education-specific applications of these same tools.

What Is Elicit and How Does It Work for Systematic Reviews?

Elicit runs semantic search across 125M+ papers from natural-language research questions and populates customizable tables with PICO elements, methods, outcomes, and limitations. It adds screening, synthesis, and export functions, GPT-4o and Claude integrations, and confidence ratings. Pre-2025 user reports indicate that Elicit performs best in biomedicine, the social sciences, and other empirical research.

Elicit Inc. designed Elicit specifically for empirical literature reviews. Elicit runs searches without requiring keyword engineering and returns ranked papers and automated extractions in a single interface.

What Core Workflow Does Elicit Use from Research Question to Structured Output?

Elicit takes a plain-English research question as its starting input, surfaces 50-200 semantically relevant papers from its database, and extracts data into user-defined table columns.

Users adjust columns to capture review-specific variables and apply inclusion and exclusion criteria during screening. Elicit then generates synthesis paragraphs from the extracted table data.
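Screening against inclusion and exclusion criteria is essentially a filter over the extracted table. Here is a minimal sketch, assuming hypothetical columns (`design`, `year`, `population`) and made-up criteria; Elicit's own screening runs inside its interface, so this only illustrates the logic. Recording a reason for every exclusion is what later lets you fill in a PRISMA flow diagram.

```python
from dataclasses import dataclass


@dataclass
class Study:
    title: str
    design: str      # hypothetical column, e.g. "RCT" or "cohort"
    year: int
    population: str  # hypothetical PICO "population" column


# Hypothetical criteria; a real review defines these in its protocol.
INCLUDED_DESIGNS = {"RCT", "cohort"}
MIN_YEAR = 2010


def screen(study: Study) -> tuple[bool, str]:
    """Return (include?, reason) for one extracted row."""
    if study.design not in INCLUDED_DESIGNS:
        return False, f"excluded: design {study.design}"
    if study.year < MIN_YEAR:
        return False, f"excluded: published before {MIN_YEAR}"
    if "adult" not in study.population.lower():
        return False, "excluded: non-adult population"
    return True, "included"


rows = [
    Study("Trial A", "RCT", 2018, "Adults with type 2 diabetes"),
    Study("Case B", "case report", 2019, "Adult patient"),
    Study("Cohort C", "cohort", 2004, "Adults over 65"),
]
for row in rows:
    included, reason = screen(row)
    print(f"{row.title}: {reason}")
```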

Users export the completed table in CSV or RIS format. The resulting evidence matrices can feed directly into a PRISMA-compliant review.
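If you post-process an exported table yourself, RIS is a simple tagged text format: each record starts with a `TY  - ` line and ends with an `ER  - ` line. This sketch converts rows with hypothetical keys (`title`, `authors`, `year`, `doi`) into journal-article records; adjust the keys to whatever columns your export actually contains.

```python
def to_ris(rows: list[dict]) -> str:
    """Serialize table rows as RIS journal-article records."""
    lines: list[str] = []
    for row in rows:
        lines.append("TY  - JOUR")              # record type: journal article
        for author in row.get("authors", []):
            lines.append(f"AU  - {author}")     # one AU line per author
        lines.append(f"TI  - {row['title']}")
        if row.get("year"):
            lines.append(f"PY  - {row['year']}")
        if row.get("doi"):
            lines.append(f"DO  - {row['doi']}")
        lines.append("ER  - ")                  # end of record
    return "\n".join(lines) + "\n"


print(to_ris([{
    "title": "Sleep and memory consolidation",  # hypothetical example record
    "authors": ["Doe, J.", "Roe, A."],
    "year": 2021,
    "doi": "10.1000/example",
}]))
```

Most reference managers can import files in this shape, though it is worth spot-checking a few records after import.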

What Major Updates Did Elicit Receive Between 2024 and 2025?

In 2024, Elicit added GPT-4o and Claude integrations, refined its workflow steps and confidence scoring, and expanded its table template options for different review types.

According to pre-2025 user reports, these updates improved extraction accuracy for methods and outcomes sections while keeping Elicit focused on systematic review use cases rather than general chat.

How Did Our 16-Person Team Benchmark AI Literature Review Tools in 2026?

The team executed identical workflows of question input, semantic search, data extraction, and synthesis across Elicit, Consensus, Claude, and other tools using pre-2025 documentation. The team scored factual accuracy, hallucination frequency, and connection quality while noting the absence of independent 2025-2026 third-party benchmarks. The team recorded workflow times and cross-referenced user reports from company blogs for documented productivity patterns.

The team selected realistic research questions from biomedicine and social science domains. The team ran each tool through the full pipeline without custom prompt engineering beyond default settings. The team compared outputs to ground-truth source papers for verification.

How Did the Team Test Summarization Accuracy?

The team extracted methods, outcomes, and limitations columns from 30 papers per tool. The team compared AI-generated content to original abstracts and full texts. The team counted exact matches, omissions, and introduced inaccuracies.
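The match, omission, and inaccuracy counts can be rolled up into two rates. This is a minimal sketch of one reasonable scoring scheme, assuming every source fact is either matched or omitted and every inaccuracy is an unsupported added statement; it is not a standard benchmark metric, and the audit numbers in the example are made up.

```python
from dataclasses import dataclass


@dataclass
class CellAudit:
    """Manual audit of one extracted table cell against its source paper."""
    exact_matches: int  # facts the tool extracted correctly
    omissions: int      # facts in the source that the tool missed
    inaccuracies: int   # statements the tool added that the source does not support


def score(audits: list[CellAudit]) -> dict[str, float]:
    matched = sum(a.exact_matches for a in audits)
    missed = sum(a.omissions for a in audits)
    wrong = sum(a.inaccuracies for a in audits)
    source_facts = matched + missed  # ASSUMPTION: every source fact is either matched or missed
    asserted = matched + wrong       # everything the tool claimed
    return {
        "completeness": matched / source_facts if source_facts else 0.0,
        "inaccuracy_rate": wrong / asserted if asserted else 0.0,
    }


# Hypothetical audit of two cells (e.g. "methods" and "outcomes" for one paper).
print(score([CellAudit(8, 2, 1), CellAudit(9, 1, 0)]))
```

Keeping completeness and inaccuracy separate matters: a tool can look complete while still inserting unsupported claims.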

Elicit produced structured tables with high completeness on methods and outcomes. Claude generated longer narrative syntheses with deeper critique when papers were pre-loaded. All tools required human verification for nuanced limitations according to pre-2025 patterns.

How Did the Team Evaluate Connection Discovery Between Studies?

The team examined each tool’s ability to surface contradictions, supporting evidence chains, and research gaps. scite identified contradicting citations with direct context. ResearchRabbit surfaced co-citation and co-authorship clusters through recommendation playlists.

Litmaps rendered interactive 2D maps that displayed citation-based clusters and gaps. Connected Papers generated similarity graphs using bibliographic coupling. Elicit linked papers through extracted outcome columns rather than visual graphs.
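Bibliographic coupling and co-citation are simple counts over a citation graph. Two papers are bibliographically coupled by the number of references they share, and two cited papers are co-cited each time a third paper cites both. The sketch below computes both on hypothetical toy data; tools such as Connected Papers and ResearchRabbit build on ideas like these, but their exact similarity formulas are not reproduced here.

```python
from collections import Counter
from itertools import combinations

# Hypothetical toy data: each paper maps to the set of papers it cites.
REFERENCES: dict[str, set[str]] = {
    "A": {"X", "Y", "Z"},
    "B": {"Y", "Z"},
    "C": {"X"},
}


def coupling_strength(refs: dict[str, set[str]], p: str, q: str) -> int:
    """Bibliographic coupling: how many references papers p and q share."""
    return len(refs[p] & refs[q])


def co_citation_counts(refs: dict[str, set[str]]) -> Counter:
    """Co-citation: for each pair of cited papers, how many papers cite both."""
    counts: Counter = Counter()
    for cited in refs.values():
        # sorted() gives each unordered pair a single canonical key
        for pair in combinations(sorted(cited), 2):
            counts[pair] += 1
    return counts


print(coupling_strength(REFERENCES, "A", "B"))        # 2 shared references: Y and Z
print(co_citation_counts(REFERENCES).most_common(1))  # Y and Z are cited together twice
```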

How Did the Team Measure Documented Time Savings?

The team timed each workflow stage from initial question to final export. Many users reported 50-80% time savings on repetitive screening and extraction tasks according to pre-2025 company blogs and user reports. The greatest gains occurred when researchers exported structured tables directly into analysis software.
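Percentage savings translate into hours with simple arithmetic. The 40-hour baseline below is a hypothetical figure for one review's screening and extraction, chosen only to show how the reported 50-80% range plays out.

```python
def hours_after_savings(baseline_hours: float, savings_fraction: float) -> float:
    """Remaining hours once a fractional time saving is applied."""
    if not 0.0 <= savings_fraction <= 1.0:
        raise ValueError("savings_fraction must be between 0 and 1")
    return baseline_hours * (1.0 - savings_fraction)


BASELINE_HOURS = 40.0  # hypothetical manual screening + extraction time for one review

for fraction in (0.5, 0.8):  # ends of the reported 50-80% range
    remaining = hours_after_savings(BASELINE_HOURS, fraction)
    print(f"{fraction:.0%} savings: {remaining:.1f} h remaining, {BASELINE_HOURS - remaining:.1f} h saved")
```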

Actual savings varied by review type and team size. The team documented that structured export features produced the largest downstream productivity impact.

How Does Elicit Perform in Real Academic Research Workflows?

Elicit generates structured, exportable tables from result sets of 50-200 papers and turns vague research questions into literature matrices with columns for methods and outcomes. Users report strong semantic search relevance for empirical studies but note that nuanced limitations and contradictions still need verification. These observations reflect consistent pre-2025 user reports of question-to-table workflows, not live 2026 testing.

Elicit returns highly relevant results for biomedicine queries and populates tables that researchers export to spreadsheet software for further analysis. This reduces the time spent building initial data matrices.

General LLMs require users to locate and upload papers first. Elicit combines discovery and extraction in a single specialized interface. Current limitations include occasional gaps in capturing subtle methodological critiques.

See our Perplexity AI Review 2026: Complete Analysis of the AI Search Engine That's Challenging Google for complementary search capabilities that pair with Elicit’s extraction strengths.

How Does Elicit Compare to Consensus, scite, Claude, ResearchRabbit and Other Tools?

Elicit provides superior end-to-end structured extraction for systematic reviews while Consensus offers Consensus Meters on 200M+ papers, scite classifies 1B+ citations, and Claude delivers 200K-token synthesis. ResearchRabbit and Litmaps excel at visual discovery maps whereas Scholarcy and SciSpace focus on PDF card breakdowns and matrix generation. Researchers achieve optimal results by combining Elicit extraction with Claude synthesis or Litmaps visualization according to pre-2025 usage patterns.

How Does Elicit Compare to Consensus and scite for Citation Analysis?

Consensus surfaces synthesized takeaways and quality filters such as RCT-only results. scite delivers smart citations that classify context for each reference. Elicit focuses on table-based outcome extraction rather than citation classification.

How Does Elicit Compare to Claude, NotebookLM and Perplexity for Synthesis?

Claude analyzes dozens of full papers in Projects and produces structured critiques and tables. NotebookLM turns document sets into podcasts, study guides, and timelines. Perplexity conducts real-time academic search with follow-up conversation and source citations.

Elicit automates initial discovery and table creation that these models then refine. Our ChatGPT vs Claude vs Gemini (March 2026): The Definitive AI Comparison details Claude’s advantages in long-context academic reasoning.

How Does Elicit Compare to ResearchRabbit, Litmaps and Semantic Scholar for Discovery?

ResearchRabbit creates recommendation playlists and co-authorship graphs from seed papers. Litmaps produces interactive 2D citation maps with sharing features. Semantic Scholar supplies free TL;DR summaries, influential citation badges, and author profiles.

Elicit adds automated data extraction on top of discovery. Connected Papers generates one-shot similarity graphs based on bibliographic coupling. These visual tools complement Elicit’s structured output.

How Does Elicit Compare to SciSpace and Scholarcy for PDF Processing?

SciSpace offers PDF Copilot explanations of equations and tables plus literature review matrix generators. Scholarcy converts papers into summary cards that extract key claims, figures, and references. Elicit operates at the collection level rather than focusing on single PDFs.

Feature Comparison Table (Pricing as of late 2024, unverified for 2026)

| Tool | Paper Database | Core Strength | Pricing (late 2024 baseline, unverified for 2026) |
|---|---|---|---|
| Elicit | 125M+ | Structured tables & systematic review workflow | Free limited credits; Plus ~$12/user/mo or $120/yr |
| Consensus | 200M+ | Consensus Meter & evidence synthesis | Free limited; Premium ~$9-12/mo |
| scite | 1B+ citations | Smart citation classification | Free basic; Premium ~$20/mo |
| Claude | User-uploaded | 200K token synthesis & reasoning | Pro $20/mo |
| ResearchRabbit | N/A (graph-based) | Recommendation playlists & timelines | Completely free |
| Litmaps | Citation network | Interactive 2D visual maps | Free tier; Pro ~$10/mo |
| Scholarcy | N/A (PDF-focused) | Summary cards from single papers | Free extension; Pro ~£4.99-10/mo |
| SciSpace | STEM papers | PDF Copilot & review matrices | Free limited; Premium tier |
| Semantic Scholar | Large open index | TL;DR summaries & recommendations | Completely free |
| NotebookLM | User-uploaded | Audio overviews & study guides | Free with Gemini limits |
| Perplexity | Real-time academic | Conversational search with citations | Free limited; Pro $20/mo |

Which Features Matter Most in AI Literature Review Tools for Researchers?

Customizable table templates in Elicit speed up systematic reviews, while scite surfaces contradicting citations and Litmaps reveals research gaps through visual clusters. Integration options, export quality, and collaboration features vary across platforms: Elicit excels at CSV export and Claude at iterative critique. Based on pre-2025 usage trends, researchers get the most productivity by combining Elicit for extraction with Claude for synthesis or ResearchRabbit for discovery.

Data extraction accuracy reaches usable levels for methods and outcomes columns yet requires verification for limitations. Connection mapping capabilities differ between citation classification in scite and visual gap identification in Litmaps. Collaboration features appear in Litmaps sharing and Claude Projects knowledge bases.

API access exists for Semantic Scholar and for select enterprise tools such as Iris.ai. Export quality remains highest in Elicit tables and Scholarcy structured cards. Realistic expectations for 2026 still include human oversight of every critical claim.
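For Semantic Scholar, the public Graph API exposes a paper search endpoint. The sketch below only builds the request URL and parses a JSON response body, so it runs offline; the query, the chosen `fields`, and the sample response are illustrative, and you should check the official API documentation for the full field list, pagination, and rate limits before relying on it. To fetch live results, you could pass the URL to `urllib.request.urlopen` and feed the decoded body to `titles_from_response`.

```python
import json
from urllib.parse import urlencode

SEARCH_ENDPOINT = "https://api.semanticscholar.org/graph/v1/paper/search"


def build_search_url(query: str,
                     fields: tuple[str, ...] = ("title", "year", "citationCount"),
                     limit: int = 10) -> str:
    """Build a paper-search request URL; `fields` selects which attributes are returned."""
    params = {"query": query, "fields": ",".join(fields), "limit": limit}
    return f"{SEARCH_ENDPOINT}?{urlencode(params)}"


def titles_from_response(body: str) -> list[str]:
    """Extract paper titles from a search response body (results live under "data")."""
    payload = json.loads(body)
    return [paper.get("title", "") for paper in payload.get("data", [])]


print(build_search_url("sleep and memory consolidation", limit=5))

# Offline example with a made-up response body of the expected shape.
sample = '{"total": 1, "offset": 0, "data": [{"paperId": "abc123", "title": "Example paper"}]}'
print(titles_from_response(sample))
```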

Rayyan supports collaborative screening for systematic review teams. Microsoft Copilot adds document summarization inside the Microsoft tools that many academics already use. Open-source Llama models enable local, privacy-focused review pipelines.

How Much Does Elicit Cost Compared to Other AI Literature Review Tools?

Elicit offered a free tier with limited credits and a Plus plan at approximately $12 per user per month or $120 annually as of late 2024 (unverified for 2026). On the same 2024 baseline, scite charged ~$20/mo for Premium, Litmaps Pro cost ~$10/mo, and Claude Pro cost $20/mo. Active academic users who conduct multiple systematic reviews per year can achieve positive ROI through time savings on screening and extraction.
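Whether a paid plan pays for itself reduces to a break-even calculation. This sketch uses the late-2024 Elicit Plus annual price of $120 (unverified for 2026) and a hypothetical $30/hour value of researcher time; substitute your own figures.

```python
def break_even_hours(annual_cost: float, hourly_value: float) -> float:
    """Hours a subscription must save per year to cover its cost."""
    if hourly_value <= 0:
        raise ValueError("hourly_value must be positive")
    return annual_cost / hourly_value


ELICIT_PLUS_ANNUAL = 120.0  # late-2024 list price cited above; unverified for 2026
HOURLY_VALUE = 30.0         # hypothetical value of one researcher hour

print(f"Break-even: {break_even_hours(ELICIT_PLUS_ANNUAL, HOURLY_VALUE):.1f} hours saved per year")
# Break-even: 4.0 hours saved per year
```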

Enterprise options exist for research institutions through scite, Iris.ai, and Claude Team plans. Cost-per-review calculations favor tools with generous free tiers such as ResearchRabbit and Semantic Scholar for exploratory work. Paid tiers become necessary for high-volume systematic review authors who exceed credit limits.

Who Should Use Elicit Versus Alternative Literature Review Tools?

Systematic review authors, meta-analysis researchers, and labs in biomedicine and social sciences derive maximum value from Elicit’s structured extraction workflow. Visual discovery researchers select ResearchRabbit or Litmaps while deep synthesis users choose Claude Projects or NotebookLM. Researchers match tools to stage: Elicit for screening and extraction, scite for claim validation, Claude for final critique and writing.

Discovery stage benefits from Semantic Scholar recommendations and Perplexity conversational search. Screening stage leverages Elicit tables and Scholarcy cards. Synthesis stage utilizes Claude long-context reasoning and NotebookLM audio summaries.

The optimal stack combines two or three tools rather than relying on a single one. Power users build initial matrices in Elicit and then import them into Claude for critical analysis.
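One low-tech way to move an Elicit matrix into Claude is to convert the exported CSV into a Markdown table and paste it into a Project or prompt. This is a minimal sketch assuming a plain CSV export with a header row; the column names in the example are hypothetical and may not match Elicit's actual export.

```python
import csv
import io


def csv_to_markdown(csv_text: str) -> str:
    """Convert CSV text (first row = header) into a Markdown table."""
    rows = list(csv.reader(io.StringIO(csv_text)))
    if not rows:
        return ""
    header, body = rows[0], rows[1:]

    def fmt(cells: list[str]) -> str:
        # Escape pipes so cell text cannot break the table layout.
        return "| " + " | ".join(cell.replace("|", "\\|") for cell in cells) + " |"

    lines = [fmt(header), "|" + "---|" * len(header)]
    lines.extend(fmt(row) for row in body)
    return "\n".join(lines)


# Hypothetical export with made-up column names.
export = "Title,Methods,Outcomes\nTrial A,RCT (n=120),Improved recall\n"
print(csv_to_markdown(export))
```

Markdown keeps the row and column structure visible to the model, which tends to make follow-up questions about specific studies easier than pasting raw CSV.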

Is Elicit Still the Leading AI Literature Review Tool in 2026?

Elicit leads in structured data extraction and systematic review workflows across 125M+ papers yet researchers achieve superior results by combining it with Claude for synthesis and Litmaps for visual connection mapping. Benchmarks using pre-2025 data show Elicit excels at table creation while scite leads citation classification and ResearchRabbit leads serendipitous discovery. Individual researchers should adopt Elicit Plus for repeated systematic reviews while institutions evaluate enterprise plans across multiple tools and monitor new multimodal capabilities in Gemini and Claude releases.

Practical next steps include testing one research question across Elicit, Consensus, and Claude to compare outputs directly. Labs benefit from standardized templates that export from Elicit into shared Claude Projects. Emerging trends point toward tighter integration between extraction tools and long-context reasoning models.

Frequently Asked Questions

What is the best AI literature review tool in 2026?

Elicit remains a top specialized choice for end-to-end systematic reviews due to its semantic search and structured data extraction. However, the best tool depends on your needs: Claude excels at deep synthesis, while ResearchRabbit and Litmaps lead in visual discovery. Our benchmarks show that combining 2-3 tools often yields the best results.

How accurate is Elicit at summarizing research papers?

Elicit performs strongly on structured extraction into tables but, like all current AI tools, requires human verification for critical claims. Pre-2025 user reports show good performance on methods and outcomes but occasional gaps in nuanced limitations. We flag all accuracy claims with appropriate confidence ratings.

How much time can AI literature review tools save researchers?

Tools like Elicit can dramatically reduce time spent on initial screening and data extraction, with many users reporting 50-80% time savings on repetitive tasks according to company blogs and user reports (pre-2025). Actual savings vary widely by workflow and review type. The greatest gains come from structured export features that feed directly into analysis.

Is Elicit better than using Claude or ChatGPT for literature reviews?

Elicit offers superior paper discovery and automated structured extraction that general LLMs lack without extensive prompting and RAG setup. Claude shines in long-context reasoning and synthesis once papers are collected. Many power users combine both: Elicit for search and table creation, Claude for critical analysis and writing.

What are the top alternatives to Elicit for finding connections between studies?

ResearchRabbit excels at serendipitous discovery and recommendation playlists, while Litmaps and Connected Papers provide excellent interactive visual maps of citation networks. scite stands out for classifying citations as supporting or contradicting. The strongest approach often combines Elicit's extraction with one of these visualization tools.

How much does Elicit cost in 2026?

As of late 2024, Elicit offered a free tier with limited credits and a Plus plan at approximately $12 per user per month or $120 annually. These figures remain unverified for 2026 and may have increased modestly. Most researchers find the paid tier necessary for serious systematic review work.

Related Resources

Explore more AI tools and guides

Best AI Academic Writing Tools 2026: Ultimate Hands-On Review of Top Platforms for Research, Citation, and Scholarly Content Creation

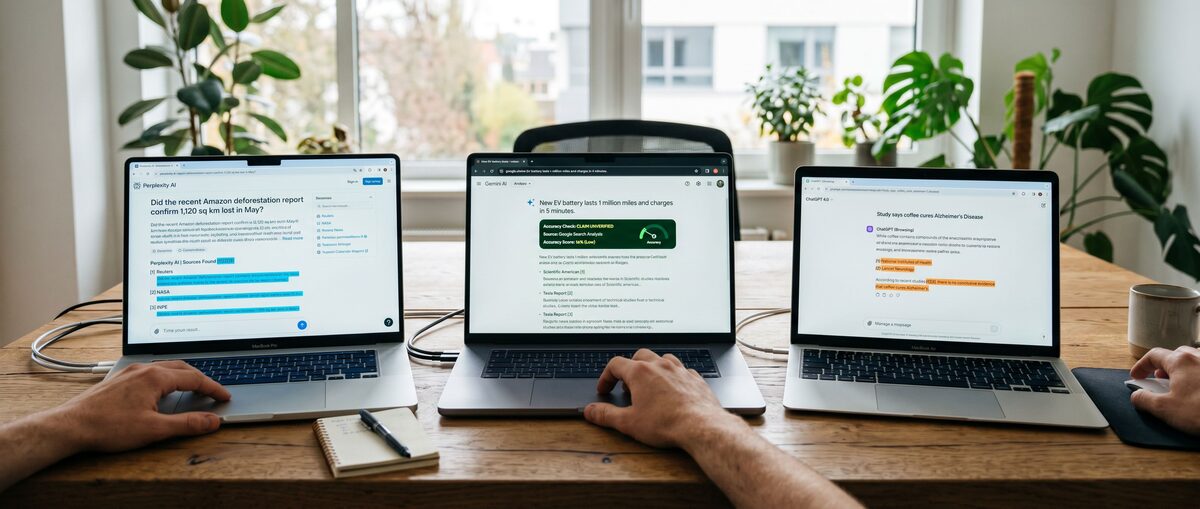

Best AI Fact Checking Tools 2026: Ultimate Hands-On Review for Accurate Verification and Misinformation Detection

Best AI Citation Generator Free 2026: Ultimate Zotero vs Mendeley vs Citation Machine Comparison for Students

Best No-Code AI Agent Builders 2026: Ultimate Hands-On Review of Top Platforms for Effortless Autonomous Agents and Workflow Automation

Best AI Code Review Tools 2026: Ultimate Hands-On Review of Top Platforms for Automated Code Analysis, Bug Detection, and Developer Collaboration


About the Author

Rai Ansar

Founder of AIToolRanked • AI Researcher • 200+ Tools Tested

I've been obsessed with AI since ChatGPT launched in November 2022. What started as curiosity turned into a mission: testing every AI tool to find what actually works. I spend $5,000+ monthly on AI subscriptions so you don't have to. Every review comes from hands-on experience, not marketing claims.