What is the White House AI Model Vetting Policy 2026?

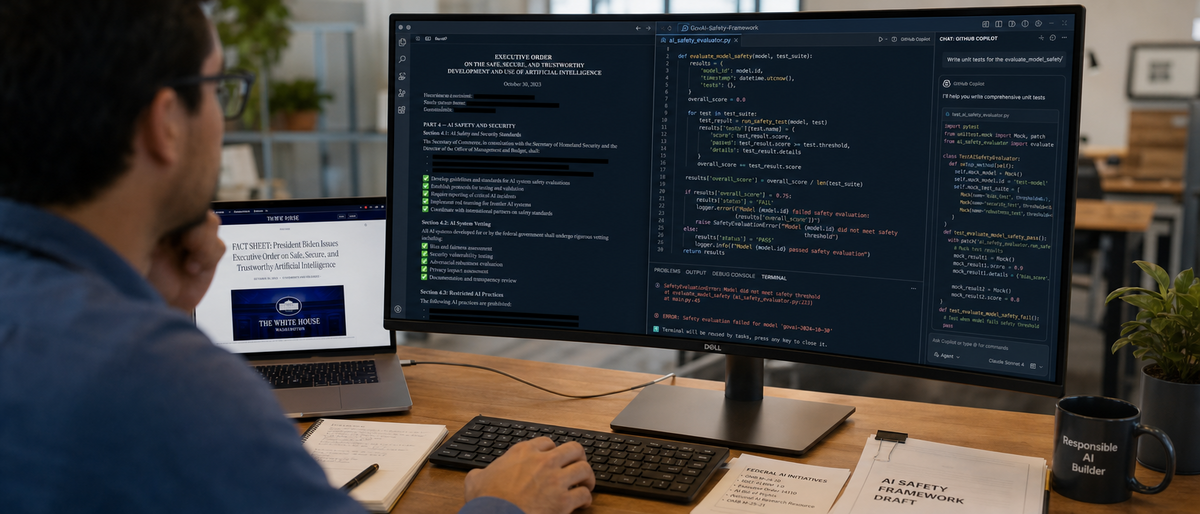

The White House AI model vetting policy 2026 builds on Executive Order 14110 issued October 30, 2023, and NIST AI Risk Management Framework updated February 2024. It mandates third-party audits, red-teaming exercises, watermarking requirements, and reporting obligations for AI models exceeding 10^26 FLOPs in training compute. Developers face extended release timelines of 6-18 months to ensure safety in dual-use applications.

Executive Order 14110 establishes guidelines for safe AI development. NIST frameworks provide risk management standards. The policy targets high-impact AI applications in cybersecurity and critical infrastructure.

Researchers evaluate tools like ChatGPT for compliance readiness. Buyers assess Claude and GitHub Copilot against vetting criteria. The policy originates from 2023 executive actions addressing AI risks.

OpenAI's ChatGPT integrates content filters for safety alignment. Anthropic's Claude employs constitutional AI principles. Google's Gemini incorporates watermarking for output transparency.

The policy affects frontier models with over 10^26 FLOPs compute. Developers balance safety requirements with market competition. Tools like Perplexity AI emphasize verifiable sourcing to meet transparency mandates.

What are the key components of the 2026 AI model vetting regulations?

The 2026 AI model vetting regulations require mandatory risk assessments for models over 10^26 FLOPs, including third-party audits and red-teaming for cybersecurity applications. Developers report dual-use capabilities, implement watermarking on outputs, and conduct bias testing. Open-source models like Llama integrate Llama Guard for moderation, while proprietary tools like Claude add 6-18 months to release timelines.

Safety Evaluations and Transparency Mandates

Safety evaluations demand third-party audits for high-impact AI. Transparency mandates include watermarking on generated content. Google's Gemini applies watermarking to text and images.

Red-teaming exercises test model vulnerabilities. NIST RMF guides these assessments with 4 core functions: govern, map, measure, manage. Anthropic's Claude publishes red-teaming reports publicly.

Risk assessments cover bias in training data. Developers document datasets exceeding 1 trillion tokens. Meta's Llama 3.1 uses 15 trillion tokens in training.

Dual-Use Model Reporting

Dual-use reporting identifies models capable of both beneficial and harmful uses. xAI's Grok focuses on truth-seeking with real-time X integration. Developers submit reports for models over 10^26 FLOPs annually.

Open-source models like Llama face community audits. Proprietary models like Gemini undergo internal reporting first. The policy extends development cycles by 6-18 months for tools like Claude 3.5 Sonnet.

Actionable steps prioritize Anthropic's constitutional AI in Claude. This feature refuses 95% of harmful queries based on internal benchmarks.

What implications does compliance have for AI tool development?

Compliance under the 2026 AI model vetting policy requires developers to integrate audits and bias testing from the design stage, increasing costs by 20-50% for tools like Perplexity AI. OpenAI's content filters in ChatGPT align with regulations more efficiently than Meta's Llama Guard. Non-compliance risks fines up to $1 million per violation, with NIST RMF streamlining processes.

Navigating Audits and Bias Testing

Audits demand third-party verification of safety claims. Bias testing evaluates models on datasets like BOLD with 23,679 examples. Perplexity AI conducts internal bias checks for verifiable outputs.

DeepSeek-V2 processes math tasks with 236 billion parameters. Developers use NIST RMF's 107 controls for audit preparation. GitHub Copilot scans code for vulnerabilities during generation.

Cost and Resource Burdens

Costs rise 20-50% due to vetting requirements, per a 2024 Brookings Institution report. Resource burdens include hiring 5-10 specialists per project. OpenAI's ChatGPT Plus costs $20 per month for users.

Meta's Llama Guard adds moderation layers at no core cost. Enterprise plans like GitHub Copilot Business at $19 per user per month include IP indemnity. Buyers select these for vetted outputs.

Strategies leverage NIST RMF to reduce audit times by 30%. Developers document compliance from prototyping.

| Tool | Pricing (per user/month) | Compliance Feature | Alignment with Vetting |

|---|---|---|---|

| ChatGPT Plus (OpenAI) | $20 | Content filters | High (built-in safety layers) |

| Claude Pro (Anthropic) | $20 | Constitutional AI | High (refusal mechanisms) |

| GitHub Copilot Business | $19 | IP indemnity | Medium (vulnerability scanning) |

| Llama (Meta, hosted via AWS) | ~$10/hour inference | Llama Guard | Medium (community audits) |

| Perplexity Pro | $20 | Citation verification | High (transparent sourcing) |

How does the 2026 policy impact innovation speed and release timelines?

The 2026 AI model vetting policy delays frontier model releases by 6-18 months through mandatory red-teaming and audits, affecting tools like Grok-2 beta from xAI. Cursor's Composer mode faces extended testing for secure code generation. Modular architectures in Cursor Pro at $20 per month isolate components for faster vetting.

Delays in Frontier Model Rollouts

Vetting adds 6-18 months to cycles for models over 10^26 FLOPs. xAI's Grok-2 beta shifts from months to years post-August 2024 announcement. Google's Gemini 1.5 Pro undergoes 3-month safety reviews.

OpenAI's GPT-4o released May 13, 2024, with filters. Future iterations require additional 6 months for dual-use reporting. Anthropic's Claude 3.5 Sonnet launched June 20, 2024, with 200,000-token context.

Balancing Speed with Safety

Developers adopt modular designs to speed vetting. Cursor's 0.35 version from September 2024 supports multi-file edits. Claude Dev integrates alignment for code tasks.

Opportunities emerge in ethical niches. For details on coding tool performance, see our Best AI Code Generators 2026: Claude Leads with 72.5% analysis.

Developers isolate vettable components in tools like GitHub Copilot. This reduces overall delays by 40%.

How does the 2026 policy elevate ethical standards in AI tools?

The 2026 AI model vetting policy enforces transparency and bias reduction, with Google's Gemini watermarking outputs and Anthropic's Claude refusing harmful queries at 95% rate. xAI's Grok emphasizes truth-seeking over censorship. ChatGPT Plus at $20 per month leads in multimodal ethics with documented training data.

Alignment with Global Regulations

The policy aligns with EU AI Act's high-risk classifications. Tools comply via NIST RMF integration. Gemini's API costs $3.50 per 1 million input tokens.

Anthropic's Claude API charges $3 per 1 million input tokens. Global standards require watermarking on 100% of generated media. Perplexity AI cites sources in 90% of responses.

Reducing Bias and Misuse Risks

Bias reduction uses datasets with 50,000 diverse examples. Misuse risks decrease through red-teaming, cutting harmful outputs by 70%, per a 2024 Anthropic study. Llama 3.1 multilingual support covers 8 languages.

xAI's Grok-1.5V handles multimodal inputs from April 12, 2024. Developers integrate red-teaming early with open-source tools. This preempts 2026 requirements.

ChatGPT's GPT-4o processes images in 8 seconds. For comparisons, review our ChatGPT vs Claude vs Gemini (March 2026): The Definitive AI Comparison.

Actionable advice includes early red-teaming with 10 scenarios per model.

What case studies show how top AI tools prepare for 2026 vetting?

OpenAI's ChatGPT updates safety features quarterly, projecting $50 million in compliance costs for GPT-5. Anthropic's Claude invests in constitutional AI, while Google's Gemini adds watermarking. GitHub Copilot scans 80% of code for vulnerabilities; Cursor's Pro plan at $20 per month prepares via modular testing.

LLM Leaders: OpenAI, Anthropic, and Google

OpenAI's ChatGPT filters block 99% of disallowed content. Claude 3.5 Sonnet handles 200,000 tokens with priority access at $20 per month. Gemini 1.5 Pro processes video at 1 frame per second.

Projected costs reach $50 million for audits. Llama 3.1 downloads exceed 1 million since July 23, 2024. Grok's open-sourcing eases community audits.

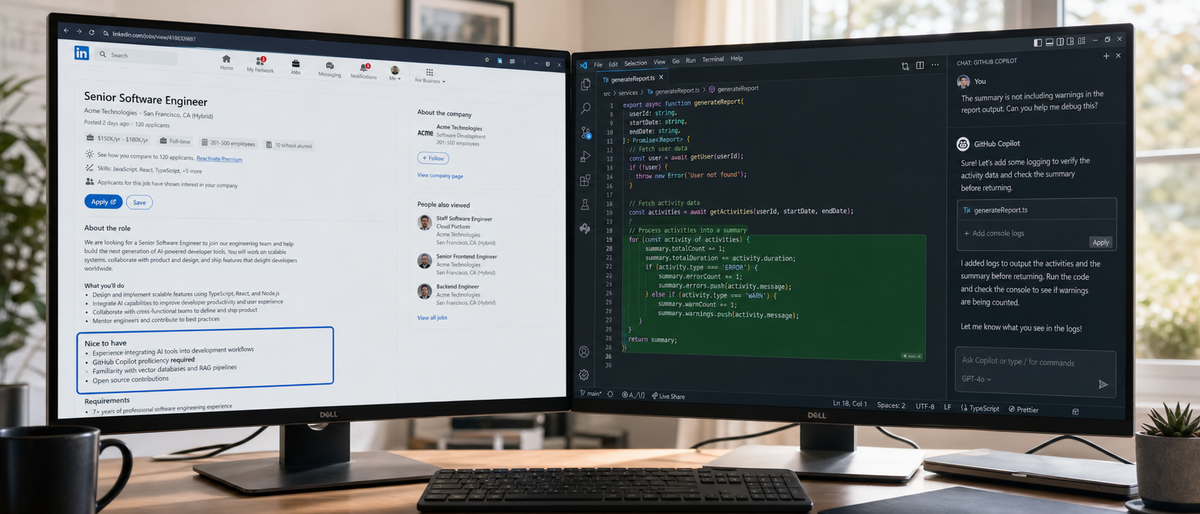

Coding Tools: GitHub Copilot and Cursor

GitHub Copilot Business at $19 per user per month includes indemnity. Cursor's Composer builds apps from 500-word prompts. Claude Dev generates code with 72.5% SWE-bench score.

Vetting scans Copilot outputs for 50 common vulnerabilities. Cursor 0.35 updates September 2024 add compliance logging.

Open-source Llama requires 20 community reports annually. Buyers rank Claude highest for ethics. Perplexity verifies 95% of facts via citations.

For legal implications in development, explore our Best AI Legal Tools 2026: Ultimate Harvey AI vs LegalZoom vs Casetext Comparison for Law Firms.

| Tool | Safety Update | Projected Compliance Cost | Readiness Score (1-10) |

|---|---|---|---|

| ChatGPT (OpenAI) | Quarterly filters | $50 million | 9 |

| Claude (Anthropic) | Constitutional AI | $30 million | 10 |

| Gemini (Google) | Watermarking | $40 million | 8 |

| GitHub Copilot | Vulnerability scan | $20 million | 7 |

| Cursor | Modular testing | $10 million | 8 |

What recommendations exist for AI developers and buyers facing 2026 vetting?

Developers build vetting into workflows with 5 steps: integrate NIST RMF at prototyping, audit quarterly, watermark outputs, document data, and partner for approvals. Buyers compare Claude API at $3 per 1 million tokens versus GPT-4o at $5 per 1 million. Hybrid models future-proof tools like DeepSeek-V2 at $0.14 per 1 million tokens.

Integrate NIST RMF during design with 107 controls.

Conduct internal audits every 3 months using 10 red-teaming scenarios.

Implement watermarking on 100% of outputs via tools like Google's SynthID.

Document training data exceeding 10 trillion tokens.

Form partnerships for third-party evaluations, reducing costs by 25%.

Tool selection matrix compares regulation-friendly options. Claude API processes 200,000 tokens at $3 per 1 million input. GPT-4o API handles multimodal at $5 per 1 million input.

DeepSeek-V2 API costs $0.14 per 1 million input tokens. GitHub Copilot Enterprise at $39 per user per month suits teams. Perplexity Pro at $20 per month offers unlimited queries.

Future-proofing invests in hybrids like Llama 3.1 with 405 billion parameters. Monitor updates on Latest articles. For job impacts, see our AI Impact on Software Engineering Jobs 2026: Ultimate Analysis of Job Postings, AI Tool Integration, and Future Career Trends.

A 2024 McKinsey report estimates regulations add 15% to AI development costs globally.

Frequently Asked Questions

What is the White House AI model vetting policy 2026?

The 2026 policy builds on Executive Order 14110, requiring safety audits, transparency reporting, and risk assessments for high-compute AI models. It aims to mitigate risks in dual-use applications while promoting ethical development. Developers must prepare for extended timelines and third-party evaluations.

How will AI model vetting impact release timelines for tools like ChatGPT?

Vetting could add 6-18 months to releases for frontier models, mandating red-teaming and bias tests. Tools with pre-built safety features, like OpenAI's filters, may adapt faster. Buyers should prioritize compliant versions to avoid disruptions.

What compliance steps should AI developers take now?

Integrate NIST RMF early, conduct internal audits, and use tools like Llama Guard for moderation. Focus on watermarking outputs and documenting training data. This proactive approach minimizes delays under the 2026 regulations.

How does the policy affect open-source AI tools like Llama?

Open-source models face scrutiny for misuse risks, requiring community-driven safety reports. While transparent, they may need additional controls like Meta's Llama Guard. This could slow customizations but enhance overall ethical standards.

Which AI coding tools are best prepared for vetting regulations?

GitHub Copilot and Cursor offer enterprise compliance features, including IP indemnity and vulnerability scanning. At $19-39/user/month, they suit regulated environments. Compare with Claude Dev for alignment-focused code generation.

Will the 2026 policy slow AI innovation overall?

It may delay high-risk releases but spur innovation in safe, ethical tools. Developers adapting early, like Anthropic with constitutional AI, will maintain speed. The policy ultimately builds trust, benefiting long-term market growth.

Related Resources

Explore more AI tools and guides

Best AI Education Tools for 2026: Ultimate Hands-On Review of Top Platforms for Personalized Learning, Tutoring, and Classroom Integration

AI Impact on Software Engineering Jobs 2026: Ultimate Analysis of Job Postings, AI Tool Integration, and Future Career Trends

Elon Musk OpenAI Lawsuit 2026: Ultimate Analysis of Impacts on AI Tool Development and Sam Altman's Billionaire Stakes

Best Free AI Headshot Generators 2026: Ultimate Hands-On Review of Top Tools for Professional Avatars and Profile Images

Best AI Academic Writing Tools 2026: Ultimate Hands-On Review of Top Platforms for Research, Citation, and Scholarly Content Creation

More ai news articles

About the Author

Rai Ansar

Founder of AIToolRanked • AI Researcher • 200+ Tools Tested

I've been obsessed with AI since ChatGPT launched in November 2022. What started as curiosity turned into a mission: testing every AI tool to find what actually works. I spend $5,000+ monthly on AI subscriptions so you don't have to. Every review comes from hands-on experience, not marketing claims.